From Model to Bedside: Accelerating Computational Models for Clinical Translation in Biomedicine

The translation of sophisticated computational models from research to clinical application is bottlenecked by prohibitive computational time.


Abstract

The translation of sophisticated computational models from research to clinical application is bottlenecked by prohibitive computational time. This article addresses researchers, scientists, and drug development professionals, providing a comprehensive guide to overcoming this critical barrier. We explore the foundational reasons for slow execution, detail current methodologies for acceleration (including specialized hardware, algorithmic innovations, and cloud strategies), offer solutions for common implementation and optimization pitfalls, and establish frameworks for validating accelerated models against clinical standards. By synthesizing these four intents, the article provides a roadmap to achieve the speed, reliability, and interpretability required for real-world clinical integration.

Why Are Clinical Models So Slow? Understanding the Computational Bottlenecks to Translation

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our model inference for whole-slide image (WSI) analysis is too slow for clinical pathology workflows. What are the primary bottlenecks and solutions?

A: The primary bottlenecks are often I/O overhead from reading large WSIs and computational load from deep learning inference. Implement the following protocol:

- Use Tiled Reading: Use libraries like `openslide-python` to read specific regions (tiles) instead of the entire image.

- Implement a Caching Layer: Cache pre-processed tiles in RAM or on fast SSD for recurrent analyses.

- Optimize Model: Convert models to ONNX or TensorRT formats for hardware-accelerated inference. Use model pruning and quantization (e.g., to FP16 or INT8) to reduce size and increase speed with minimal accuracy loss.

- Hardware Acceleration: Utilize GPU inference with batch processing of tiles.
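The tiled-reading and caching steps above can be sketched as follows. This is a minimal illustration, not a reference implementation: `read_tile_from_disk`, `get_tile`, `iter_tile_origins`, the tile size, and the cache size are all hypothetical names and values, and the reader returns dummy bytes so the example runs without a slide file. In a real pipeline the reader would wrap a region read such as OpenSlide's `read_region`.

```python
from functools import lru_cache

TILE_SIZE = 512  # pixels per tile edge (illustrative value)


def read_tile_from_disk(slide_path: str, x: int, y: int, level: int) -> bytes:
    """Hypothetical stand-in for a real region read from a slide file.

    Returns dummy bytes so the sketch runs without any WSI on disk.
    """
    return f"{slide_path}:{level}:{x}:{y}".encode()


@lru_cache(maxsize=4096)  # bounded cache: least-recently-used tiles are evicted
def get_tile(slide_path: str, x: int, y: int, level: int = 0) -> bytes:
    """Return a tile, touching storage only on a cache miss."""
    return read_tile_from_disk(slide_path, x, y, level)


def iter_tile_origins(width: int, height: int, tile: int = TILE_SIZE):
    """Yield top-left (x, y) coordinates that cover a width x height region."""
    for y in range(0, height, tile):
        for x in range(0, width, tile):
            yield x, y


# The first pass populates the cache; the second pass is served from memory.
for _ in range(2):
    for x, y in iter_tile_origins(2048, 1024):
        get_tile("example.svs", x, y)

info = get_tile.cache_info()
```

An in-process LRU cache only helps when the same tiles are requested repeatedly by one process; sharing tiles across workers would need an external cache, which is outside this sketch.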

Experimental Protocol for Latency Benchmarking:

- Objective: Compare inference latency of a standard vs. optimized model on a representative WSI dataset.

- Materials: 100 WSIs (TCGA-BRCA), GPU server (NVIDIA V100), `openslide`, PyTorch, TensorRT.

- Method:

- Load pre-trained segmentation model (e.g., Hover-Net).

- Baseline: Run inference on each WSI using PyTorch FP32, recording end-to-end time.

- Intervention: Convert model to TensorRT (FP16), implement a tile cache. Run inference again.

- Measure time-to-diagnosis (from slide load to report-ready segmentation map) for both conditions.

- Analysis: Compare mean latency and 95th percentile latency.
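For the analysis step, a small timing harness along these lines may be useful. It is a sketch under stated assumptions: `time_calls` and `summarize` are hypothetical helper names, the callable passed in stands for whichever inference function is being benchmarked, and the percentile uses the standard library's inclusive interpolation rather than any particular clinical reporting convention.

```python
import statistics
import time


def time_calls(fn, inputs, warmup=2):
    """Return per-call wall-clock latencies (seconds) for fn over inputs."""
    for item in inputs[:warmup]:  # warm-up calls are executed but not recorded
        fn(item)
    latencies = []
    for item in inputs:
        start = time.perf_counter()
        fn(item)
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Mean and 95th-percentile latency for a list of measurements."""
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    p95 = statistics.quantiles(latencies, n=100, method="inclusive")[94]
    return {"mean": statistics.fmean(latencies), "p95": p95}


# Toy usage with a trivial function standing in for model inference.
measured = time_calls(lambda slide: sum(range(1000)), list(range(20)))
summary = summarize(measured)
```

Reporting the 95th percentile next to the mean matters because a few slow slides can dominate turnaround time even when the average looks acceptable.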

Q2: We experience unacceptable delays in our genomic variant calling pipeline, impacting treatment planning for time-sensitive cancers. How can we reduce runtime?

A: Delays typically occur in the alignment and variant calling stages. Optimize using:

- Accelerated Aligners: Replace BWA-MEM with faster aligners such as `minimap2` for long reads or `Accel-Align` for short reads.

- Pipeline Parallelization: Ensure each sample is processed across multiple CPU cores. Use workflow managers (Nextflow, Snakemake) for optimal resource allocation.

- Cloud Bursting: Design the pipeline to run on scalable cloud compute (e.g., AWS Batch) to parallelize across many samples during peak load.

Experimental Protocol for Pipeline Optimization:

- Objective: Reduce total processing time for a 30x whole-genome sequencing sample from FASTQ to VCF.

- Materials: 30x WGS FASTQ files (NA12878), High-performance compute cluster.

- Method:

- Baseline Pipeline: BWA-MEM (alignment) → GATK Best Practices (variant calling). Record total wall-clock time.

- Optimized Pipeline: `minimap2` (alignment) → `DeepVariant` (accelerated calling). Run with identical compute resources.

- Implement both pipelines in Nextflow to ensure consistent parallel execution.

- Analysis: Compare total runtime, CPU hours, and cost.

Q3: Our real-time prognosis update system for ICU patients becomes unresponsive when handling >100 concurrent data streams. How can we improve scalability?

A: This is a system architecture issue. Move from a monolithic to a microservices design.

- Stream Processing Framework: Implement data ingestion and pre-processing using Apache Kafka or Apache Flink for robust stream handling.

- Model Serving: Deploy prognosis models (e.g., for sepsis prediction) using a dedicated, scalable serving system like TensorFlow Serving or TorchServe.

- Asynchronous Communication: Use a message queue (Redis, RabbitMQ) to decouple data ingestion from model inference, preventing blocking.
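The decoupling idea in the last bullet can be illustrated in-process with a bounded queue. This is only a sketch: a production system would use a broker such as those named above, and `ingest`, `infer`, `score`, and `run_pipeline` are hypothetical names, with `score` standing in for a real prognosis model call.

```python
import asyncio


async def ingest(queue: asyncio.Queue, n_events: int) -> None:
    """Producer: push incoming observations without waiting on the model."""
    for i in range(n_events):
        await queue.put({"patient_id": i % 3, "heart_rate": 60 + i})
    await queue.put(None)  # sentinel: no more events


def score(event: dict) -> float:
    """Hypothetical placeholder for a real prognosis model call."""
    return event["heart_rate"] / 200.0


async def infer(queue: asyncio.Queue, results: list) -> None:
    """Consumer: pull events and score them at its own pace."""
    while True:
        event = await queue.get()
        if event is None:
            break
        results.append((event["patient_id"], score(event)))


async def run_pipeline(n_events: int = 10) -> list:
    queue = asyncio.Queue(maxsize=100)  # bounded queue applies back-pressure
    results: list = []
    await asyncio.gather(ingest(queue, n_events), infer(queue, results))
    return results


results = asyncio.run(run_pipeline(10))
```

The bounded `maxsize` is the design choice worth noting: when inference falls behind, the producer waits instead of letting memory grow without limit.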

Table 1: Model Optimization Impact on Inference Latency

| Model & Task | Original Framework | Optimized Framework | Mean Latency (Baseline) | Mean Latency (Optimized) | Speed-up Factor | Accuracy Change (Δ AUC) |

|---|---|---|---|---|---|---|

| ResNet-50 (ImageNet) | PyTorch (FP32) | TensorRT (FP16) | 15.2 ms | 4.1 ms | 3.7x | -0.002 |

| Hover-Net (Nuclei Seg) | PyTorch (FP32) | ONNX Runtime (GPU) | 124 sec/WSI | 67 sec/WSI | 1.85x | +0.001 |

| BERT (Clinical NER) | TensorFlow (FP32) | TensorFlow Lite (INT8) | 89 ms/note | 22 ms/note | 4.0x | -0.005 |

Table 2: Genomic Pipeline Runtime Comparison

| Pipeline Stage | Standard Tool (CPU Cores) | Accelerated Tool (CPU Cores) | Runtime - Standard (hrs) | Runtime - Accelerated (hrs) | Cost Reduction* |

|---|---|---|---|---|---|

| Alignment (30x WGS) | BWA-MEM (16) | minimap2 (16) | 5.2 | 1.8 | 65% |

| Variant Calling (30x WGS) | GATK HaplotypeCaller (8) | DeepVariant (8) | 8.5 | 4.1 | 52% |

| Total End-to-End | BWA + GATK (24) | minimap2 + DeepVariant (24) | 13.7 | 5.9 | 57% |

*Assuming cloud compute cost proportional to runtime.

Visualizations

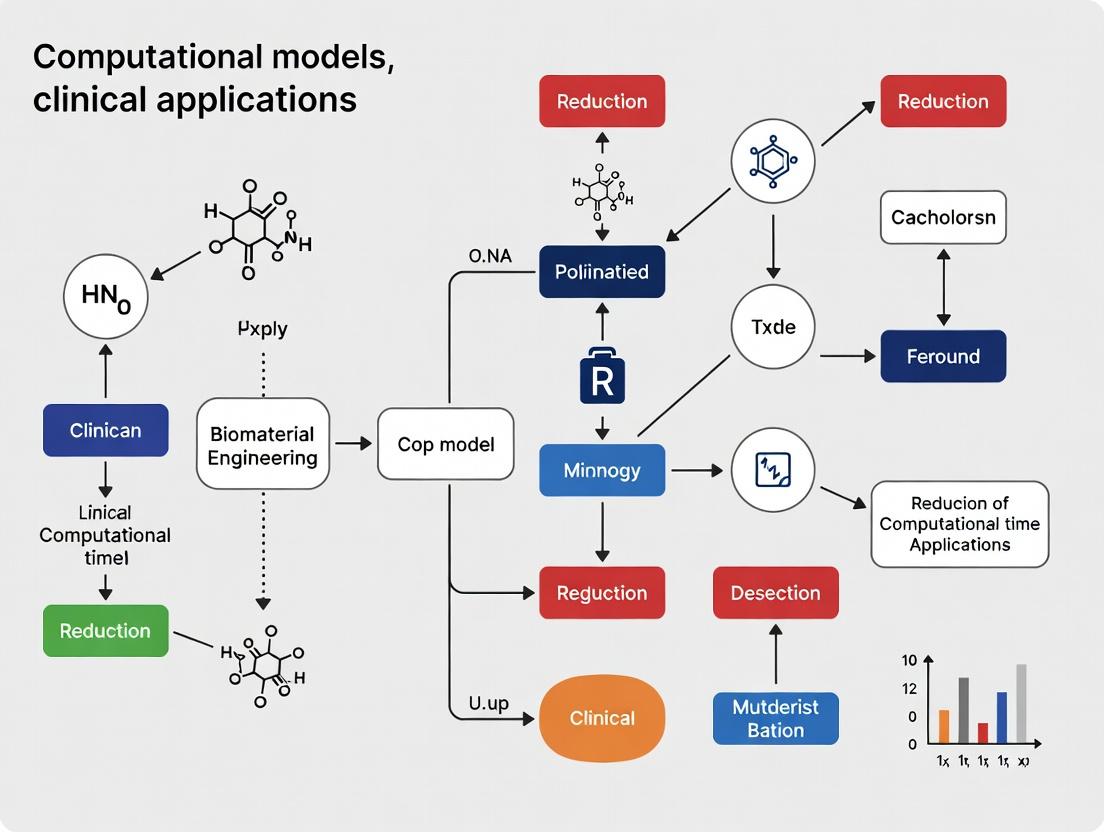

Diagram 1: Optimized Clinical AI Model Deployment Workflow

Diagram 2: Stream Processing for Real-Time Prognosis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Computational Latency Reduction Research

| Item | Function & Rationale |

|---|---|

| ONNX Runtime | Cross-platform, high-performance scoring engine for models in Open Neural Network Exchange format. Enables hardware acceleration across diverse environments. |

| NVIDIA TensorRT | SDK for high-performance deep learning inference on NVIDIA GPUs. Provides layer fusion, precision calibration, and kernel auto-tuning for minimal latency. |

| Apache Arrow | Development platform for in-memory analytics. Enables zero-copy data sharing between processes/languages, drastically reducing I/O overhead in pipelines. |

| Nextflow / Snakemake | Workflow managers that enable scalable and reproducible computational pipelines. Automatically parallelize tasks across clusters/cloud, reducing total runtime. |

| Intel oneAPI Deep Neural Network Library (oneDNN) | Open-source performance library for deep learning applications on Intel CPUs. Optimizes primitives for faster training and inference on CPU infrastructure. |

| Redis | In-memory data structure store. Used as a low-latency database, cache, and message broker to decouple services in real-time clinical systems. |

Technical Support Center

Troubleshooting Guide & FAQs

Q1: My molecular dynamics (MD) simulation of a protein-ligand system is taking weeks to complete. What are the primary bottlenecks and how can I mitigate them? A: The primary bottlenecks are typically the force field calculation complexity, the time step integration, and long-range electrostatic calculations (e.g., PME). Mitigation strategies include:

- Hardware: Utilize GPUs for parallelized non-bonded force calculations.

- Software: Use optimized MD packages like ACEMD, OpenMM, or GROMACS with GPU support.

- Parameters: Increase the time step to 4 fs using hydrogen mass repartitioning (HMR). Consider using a multiple-time-stepping algorithm.

- System Setup: Minimize explicit water molecules by using implicit solvent models (e.g., GBSA) for preliminary screening, though be aware of accuracy trade-offs.

Q2: When training a deep learning model on high-resolution whole-slide images (WSI), my GPU runs out of memory (OOM error). How can I proceed? A: This is a common issue due to the gigapixel size of WSIs. Implement a patch-based workflow:

- Patch Extraction: Use libraries like OpenSlide or CuCIM to extract smaller, manageable patches (e.g., 256x256 or 512x512 pixels) from the WSI.

- In-Memory Management: Do not load the entire WSI. Use a data generator that streams patches from storage during training.

- Model Architecture: Use a lighter backbone (e.g., EfficientNet over ResNet152) or implement gradient checkpointing.

- Mixed Precision Training: Use AMP (Automatic Mixed Precision) to reduce memory footprint by using 16-bit floats for certain operations.

Q3: My EHR-based predictive model is slow during both training and inference, primarily due to the high-dimensional, sparse feature space. What optimization techniques are recommended? A:

- Feature Reduction: Apply dimensionality reduction (PCA, t-SNE for visualization) or feature selection (using L1 regularization - Lasso) before model training.

- Algorithm Choice: For linear models, use Stochastic Gradient Descent (SGD) or coordinate descent implementations (e.g., LIBLINEAR) which are optimized for sparse data.

- Representation Learning: Train a dedicated autoencoder on the EHR data to create a dense, lower-dimensional representation, then use this for downstream modeling tasks.

- Engineering: Use sparse matrix formats (CSR, CSC) throughout the pipeline and ensure your database queries are indexed.
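To make the sparse-format point concrete, here is a dependency-free sketch of the CSR layout and a matrix-vector product that touches only non-zero entries. The function names and the toy matrix are illustrative assumptions; in practice a library implementation such as SciPy's `scipy.sparse` module would be used rather than hand-written loops.

```python
def to_csr(dense):
    """Convert a dense list-of-rows matrix to CSR arrays (data, indices, indptr)."""
    data, indices, indptr = [], [], [0]
    for row in dense:
        for col, value in enumerate(row):
            if value != 0:
                data.append(value)
                indices.append(col)
        indptr.append(len(data))  # running non-zero count closes each row
    return data, indices, indptr


def csr_matvec(data, indices, indptr, weights):
    """Multiply a CSR matrix by a dense weight vector, skipping zeros."""
    out = []
    for row in range(len(indptr) - 1):
        start, end = indptr[row], indptr[row + 1]
        out.append(sum(data[k] * weights[indices[k]] for k in range(start, end)))
    return out


# Three patients, five binary codes (toy data, not real EHR records).
X = [
    [1, 0, 0, 0, 1],
    [0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
]
data, indices, indptr = to_csr(X)
scores = csr_matvec(data, indices, indptr, [0.5, -1.0, 2.0, 0.0, 0.25])
```

Here 15 dense cells collapse to 3 stored values, and the work in `csr_matvec` scales with the number of non-zeros rather than with the full feature dimension.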

Q4: How can I quantify the computational cost of my model to identify the slowest component? A: Implement systematic profiling.

- Python: Use `cProfile` with `snakeviz` for visualization. For line-by-line analysis, use `line_profiler`.

- Deep Learning: Use built-in profilers (e.g., `torch.profiler` for PyTorch, `tf.profiler` for TensorFlow) to analyze GPU kernel execution times, memory usage, and operator calls.
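A minimal profiling sketch with the standard library's `cProfile` and `pstats` might look like the following. The functions `slow_feature_step` and `pipeline` are hypothetical stand-ins for real pipeline stages, and the file name in the commented-out line is an assumption.

```python
import cProfile
import io
import pstats


def slow_feature_step(n: int) -> int:
    """Deliberately wasteful stand-in for an expensive pipeline stage."""
    return sum(i * i for i in range(n))


def pipeline() -> int:
    total = 0
    for _ in range(20):
        total += slow_feature_step(10_000)
    return total


profiler = cProfile.Profile()
profiler.enable()
result = pipeline()
profiler.disable()

# Sort by cumulative time and write the top entries to a text report.
buffer = io.StringIO()
stats = pstats.Stats(profiler, stream=buffer).sort_stats("cumulative")
stats.print_stats(5)
report = buffer.getvalue()

# Optional: dump a binary profile that snakeviz can open (assumed file name).
# stats.dump_stats("pipeline.prof")
```

Sorting by cumulative time surfaces the stage whose call tree costs the most overall, which is usually the better starting point than the single hottest function.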

Quantitative Performance Data

Table 1: Comparison of Hardware Platforms for MD Simulation (Simulation of 100,000 atoms for 10ns)

| Hardware Configuration | Software (GPU Acceleration) | Approximate Time (Days) | Relative Cost per Simulation* |

|---|---|---|---|

| CPU Cluster (64 Cores) | GROMACS (CPU-only) | 12.5 | 1.0x (Baseline) |

| Single High-End GPU (NVIDIA A100) | ACEMD / OpenMM | 1.2 | 0.4x |

| Multi-GPU Node (4x A100) | GROMACS (GPU-aware MPI) | 0.4 | 0.6x |

*Cost includes estimated cloud compute expense; relative to baseline.

Table 2: Inference Speed for Different Image Model Architectures (Input: 512x512x3 image, batch size=1)

| Model Architecture | Parameters (Millions) | Inference Time (ms) on V100 GPU | Top-1 Accuracy (%) (ImageNet) |

|---|---|---|---|

| ResNet50 | 25.6 | 7.2 | 76.0 |

| EfficientNet-B0 | 5.3 | 4.1 | 77.1 |

| Vision Transformer (ViT-B/16) | 86.6 | 15.8 | 77.9 |

| MobileNetV3-Small | 2.5 | 2.9 | 67.4 |

Experimental Protocols

Protocol 1: Accelerated Molecular Dynamics (aMD) Setup for Enhanced Conformational Sampling Purpose: To overcome energy barriers and sample rare events (e.g., protein folding, ligand binding) faster than conventional MD. Methodology:

- System Preparation: Prepare your system (protein, solvation, ions) and minimize/equilibrate using standard MD.

- Baseline MD: Run a short conventional MD (10-50 ns) to calculate average dihedral and total potential energies.

- aMD Parameters: Apply a non-negative bias potential $\Delta V(r)$ to the true potential $V(r)$ when $V(r) < E$, giving the modified potential $V^*(r) = V(r) + \Delta V(r)$. $E$ is the acceleration energy threshold (typically set to the average potential from baseline MD plus a fraction of the standard deviation), and $\alpha$ is a tuning parameter. The bias potential is calculated as:

$$\Delta V(r) = \begin{cases} \dfrac{(E - V(r))^2}{\alpha + E - V(r)}, & V(r) < E \\ 0, & V(r) \ge E \end{cases}$$

- Production aMD: Run the aMD simulation. The boosted potential allows the system to escape local minima more frequently.

- Reweighting: Use methods such as Maclaurin series expansion or cumulant expansion of the boost factor to recover the true canonical distribution from the biased simulation for analysis.
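The bias potential from the aMD parameters step, ΔV = (E − V)² / (α + E − V) for V < E and 0 otherwise, can be written as a small function. This is a sketch for single scalar energies; `amd_boost` and `modified_potential` are hypothetical names, and real MD engines apply the boost internally to forces as well as energies.

```python
def amd_boost(v: float, e_threshold: float, alpha: float) -> float:
    """Bias potential dV = (E - V)^2 / (alpha + E - V) when V < E, else 0."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if v >= e_threshold:
        return 0.0  # no boost at or above the threshold
    gap = e_threshold - v
    return gap * gap / (alpha + gap)


def modified_potential(v: float, e_threshold: float, alpha: float) -> float:
    """Boosted potential V* = V + dV."""
    return v + amd_boost(v, e_threshold, alpha)


# Toy energies in arbitrary units: deeper minima receive a larger boost.
shallow = amd_boost(-2.0, 0.0, 10.0)
deep = amd_boost(-10.0, 0.0, 10.0)
boosted = modified_potential(-10.0, 0.0, 10.0)
```

Because the boost is always smaller than the gap E − V when α is positive, the modified potential stays below E, and a smaller α flattens the landscape more aggressively.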

Protocol 2: Efficient Patch-Based Training for Computational Pathology Purpose: To train a deep neural network on gigapixel Whole-Slide Images (WSIs) without GPU memory overflow. Methodology:

- WSI Preprocessing:

- Load the WSI using `openslide` or `cucim` at the lowest resolution to identify tissue regions (e.g., using Otsu's thresholding on a grayscale version).

- Apply a binary mask to filter out background.

- Patch Sampling Strategy:

- Random Sampling: For global tasks, randomly sample coordinates within the tissue mask.

- Grid Sampling: For dense prediction, create a grid over the tissue area.

- Annotation-Driven: If annotations exist (e.g., tumor regions), oversample patches from positive areas.

- Patch Extraction & Queue:

- Create a custom PyTorch `Dataset` class. In its `__getitem__` method, load the WSI object and extract the patch at the specified coordinates at the desired magnification (e.g., 20x).

- Critical: Do not store all patches in RAM. The dataset should only store patch coordinates and load them on-the-fly.

- DataLoader & Training: Use a standard `DataLoader` with multiple workers for I/O parallelism. Apply real-time data augmentation (rotation, flipping, color jitter) to the patches on the GPU.
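The coordinate-only dataset described in this protocol can be sketched without any framework dependency. The class below follows the `__len__`/`__getitem__` protocol that a map-style PyTorch `Dataset` uses, but `PatchIndex`, `fake_reader`, and the assumption that each tissue-mask cell corresponds to exactly one patch are all illustrative choices, not part of the original protocol.

```python
class PatchIndex:
    """Map-style dataset that stores coordinates only and reads pixels on demand."""

    def __init__(self, tissue_mask, patch_size, read_patch):
        self.patch_size = patch_size
        self.read_patch = read_patch  # callable(x, y, size) -> patch
        # Grid sampling: keep one coordinate per mask cell flagged as tissue.
        self.coords = [
            (col * patch_size, row * patch_size)
            for row, mask_row in enumerate(tissue_mask)
            for col, is_tissue in enumerate(mask_row)
            if is_tissue
        ]

    def __len__(self):
        return len(self.coords)

    def __getitem__(self, idx):
        x, y = self.coords[idx]
        # The patch is read here, on the fly; nothing is held in RAM beforehand.
        return self.read_patch(x, y, self.patch_size)


def fake_reader(x, y, size):
    """Hypothetical reader; a real one would call the slide library."""
    return {"origin": (x, y), "size": size}


mask = [
    [0, 1, 1],
    [0, 0, 1],
]
dataset = PatchIndex(mask, patch_size=256, read_patch=fake_reader)
first = dataset[0]
```

Keeping the reader as an injected callable lets each data-loading worker open its own slide handle, which tends to be safer than sharing one handle across processes.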

Visualizations

Title: EHR Model Optimization Pathways

Title: Computational Bottlenecks Across Model Types

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Accelerating Computational Models

| Item / Reagent | Function / Purpose | Example/Note |

|---|---|---|

| GPU-Accelerated MD Engines | Specialized software that offloads compute-intensive force calculations to GPUs, offering 5-50x speedup. | ACEMD, OpenMM, GROMACS (GPU build), NAMD (CUDA). |

| Automatic Mixed Precision (AMP) | A library technique that uses 16-bit and 32-bit floating points to speed up training and reduce memory usage. | NVIDIA Apex (PyTorch), tf.keras.mixed_precision (TF), native torch.cuda.amp. |

| Sparse Linear Algebra Libraries | Software libraries optimized for operations on matrices where most elements are zero, crucial for EHR data. | Intel MKL, SuiteSparse, SciPy's scipy.sparse module, cuSPARSE (GPU). |

| Data Loaders with Lazy Loading | Frameworks that stream large datasets (e.g., WSIs) from disk in small batches instead of loading entirely into RAM. | PyTorch DataLoader, TensorFlow tf.data.Dataset, custom generators with openslide. |

| Profiling & Monitoring Tools | Software to identify exact lines of code or hardware operations causing performance delays. | cProfile, torch.profiler, nvprof/Nsight Systems (GPU), snakeviz. |

| High-Performance Computing (HPC) Schedulers | Manages distribution of parallel jobs across large CPU/GPU clusters efficiently. | Slurm, PBS Pro, Apache Spark (for large-scale data processing). |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our genomic variant calling pipeline is taking over 72 hours on a local HPC cluster, delaying critical analysis. What are the primary bottlenecks and immediate mitigation strategies?

A: The bottleneck typically lies in I/O overhead from processing BAM/CRAM files and the sequential execution of tools like BWA-MEM and GATK. Immediate actions include:

- Enable Parallel File Reading: Use `samtools view -@` and `bwa-mem2` with multiple threads.

- Optimize Interim File Formats: Convert BAM files to more compute-friendly, chunk-based formats like Parquet or Zarr for intermediate steps if using workflows like Nextflow or Snakemake.

- Resource Profiling: Instrument your pipeline to log CPU, memory, and I/O usage per step. The table below summarizes common bottlenecks and solutions.

| Pipeline Step | Common Bottleneck (Traditional Architecture) | Recommended Mitigation | Expected Time Reduction* |

|---|---|---|---|

| Alignment (BWA-MEM) | Single-threaded reference indexing, serial read alignment. | Switch to bwa-mem2 (up to 3x faster). Use -t flag for multithreading. | ~30-40% |

| Duplicate Marking (Picard) | High memory footprint for whole-genome sequencing; sequential scanning. | Use sambamba or optimize Spark-based GATK4 on a cloud cluster. | ~50% for WGS |

| Variant Calling (GATK) | Single-sample, CPU-heavy haplotype caller. | Use GATK4 Spark version, batch multiple samples for joint calling. | ~65% |

*Reductions are approximations based on benchmarking studies published in 2024.

Experimental Protocol: Benchmarking Pipeline Performance

- Objective: Quantify the impact of parallel processing and optimized file formats on pipeline runtime.

- Materials: 30x Whole Genome Sequencing sample (NA12878), HPC cluster or cloud instance (minimum 16 cores, 64GB RAM).

- Method:

- Run the standard GATK Best Practices workflow (BWA-MEM + Picard + GATK HaplotypeCaller) with default settings. Record time per step.

- Run an optimized workflow using `bwa-mem2 mem -t 16`, `sambamba markdup`, and output of processed intervals in a compressed columnar format.

- Execute a third workflow using the GATK4 Spark implementation on a cluster with 4 worker nodes.

- Compare total wall-clock time and compute cost (node-hours) across all three runs.

Q2: When training a 3D convolutional neural network (CNN) on volumetric medical imaging data (e.g., CT scans), we encounter "CUDA out of memory" errors despite using a GPU with 24GB VRAM. How can we complete training?

A: This is a classic data-compute chasm issue where the spatial dimensions of 3D medical images exceed GPU memory capacity.

- Implement Gradient Accumulation: Set your batch size to 1 (or a small number) and use gradient accumulation over 8 or 16 steps. This simulates a larger batch size without increasing memory consumption.

- Use Mixed Precision Training: Employ PyTorch AMP (Automatic Mixed Precision) or TensorFlow's `tf.keras.mixed_precision` policy. This uses 16-bit floats for most activations and gradients, which can cut activation memory by up to about half and often speeds up training.

- Adopt a Patch-Based Training Strategy with Streaming: Do not load entire 3D volumes. Instead, use a streaming data loader that randomly extracts 3D patches (e.g., 128x128x128 voxels) on-the-fly from large files stored on high-speed NVMe storage.
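Why gradient accumulation simulates a larger batch can be checked numerically. The sketch below uses a one-parameter linear model in plain Python rather than a deep learning framework, so the function names, toy data, and learning rate are all assumptions made for illustration; the scaling rule (divide each micro-batch gradient by the number of accumulation steps) is the part that carries over.

```python
def mse_grad(w, batch):
    """Gradient of mean squared error for the model y_hat = w * x."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w, samples, micro_batch_size):
    """Accumulate scaled micro-batch gradients before one optimizer step."""
    micro_batches = [
        samples[i:i + micro_batch_size]
        for i in range(0, len(samples), micro_batch_size)
    ]
    accum_steps = len(micro_batches)
    grad = 0.0
    for batch in micro_batches:
        # Scale each contribution so the sum matches the large-batch mean.
        grad += mse_grad(w, batch) / accum_steps
    return grad


samples = [(1.0, 2.0), (2.0, 4.5), (3.0, 5.5), (4.0, 8.5),
           (5.0, 9.5), (6.0, 12.5), (7.0, 13.5), (8.0, 16.5)]
w = 1.0
full = mse_grad(w, samples)                                   # batch of 8
accumulated = accumulated_grad(w, samples, micro_batch_size=1)  # 8 steps of 1
w_next = w - 0.01 * accumulated  # single update after all accumulation steps
```

The two gradients agree only when micro-batches are equally sized, and layers that depend on batch statistics (for example batch normalization) still see the small micro-batch, so the equivalence is not exact for every architecture.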

Experimental Protocol: Memory-Efficient 3D CNN Training

- Objective: Train a 3D ResNet-50 model on kidney tumor CT scans (KiTS23 dataset) within a 24GB VRAM constraint.

- Materials: KiTS23 dataset, PyTorch 2.0+, NVIDIA GPU with 24GB VRAM, NVMe SSD.

- Method:

- Baseline: Attempt training with batch size 4, full precision (FP32). Note the memory error.

- Optimized Setup: Implement a `PatchDataset` class that streams random patches. Configure training with batch size 1, gradient accumulation steps=8, and AMP (`torch.cuda.amp`).

- Monitoring: Use `nvidia-smi -l 1` to track GPU memory utilization. The training script should log loss and validation Dice score per epoch.

- Compare final model performance (Dice coefficient) and total training time against a baseline model trained on downsampled images, if feasible.

Diagram Title: Workflow for Memory-Efficient 3D Medical Image Training

Q3: Our real-time sensor stream analysis for patient monitoring has high latency (>5 seconds). The pipeline (Kafka → Spark → DB) cannot keep up with 10,000 events/second. How do we reduce lag?

A: Latency often stems from micro-batching in Spark Streaming and database write contention.

- Shift to a True Streaming Engine: Replace Spark Streaming (micro-batch) with Apache Flink or Hazelcast Jet, which offer sub-second latency with true event-by-event processing.

- Optimize State Management: For operations like calculating rolling vital sign averages, use Flink's managed keyed state instead of an external database for intermediate values.

- Database Write Optimization: Use batch inserts with connection pooling for the final write. Consider time-series databases like InfluxDB or QuestDB optimized for high-throughput ingestion.
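The batch-insert recommendation can be sketched with the standard library's `sqlite3` module standing in for the real sink. The `BatchedWriter` class, the `vitals` table, and the batch size of 500 are hypothetical; a time-series database would have its own client API, and connection pooling is not shown.

```python
import sqlite3


class BatchedWriter:
    """Buffer rows in memory and flush them with one executemany per batch."""

    def __init__(self, conn, batch_size=500):
        self.conn = conn
        self.batch_size = batch_size
        self.buffer = []
        self.flushes = 0

    def write(self, row):
        self.buffer.append(row)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self):
        if not self.buffer:
            return
        with self.conn:  # one transaction per batch instead of one per row
            self.conn.executemany(
                "INSERT INTO vitals (patient_id, ts, heart_rate) VALUES (?, ?, ?)",
                self.buffer,
            )
        self.buffer.clear()
        self.flushes += 1


conn = sqlite3.connect(":memory:")  # in-memory stand-in for the real database
conn.execute("CREATE TABLE vitals (patient_id INTEGER, ts REAL, heart_rate REAL)")
writer = BatchedWriter(conn, batch_size=500)
for i in range(1200):
    writer.write((i % 10, float(i), 70.0))
writer.flush()  # flush the final partial batch
row_count = conn.execute("SELECT COUNT(*) FROM vitals").fetchone()[0]
```

A size-only trigger can leave rows sitting in the buffer when traffic is light, so a real writer would normally also flush on a timer to keep the latency bound.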

| Architecture Component | Default/Issue | Optimized Solution | Target Latency |

|---|---|---|---|

| Processing Engine | Apache Spark (Structured Streaming, 2s micro-batches) | Apache Flink (Event-time processing, <100ms) | < 500ms |

| State Store | External Redis (network hops) | Flink's RocksDB State Backend (local SSD) | < 50ms |

| Sink (Database) | Row-by-row INSERTs to PostgreSQL | Batched, asynchronous writes to a time-series DB | < 200ms |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Research |

|---|---|

| Nextflow / Snakemake | Workflow management systems that enable reproducible, scalable, and portable computational pipelines across local, cloud, and HPC environments. |

| NVIDIA Clara Parabricks | Optimized, GPU-accelerated suite for genomic analysis (e.g., variant calling), offering significant speed-ups over CPU-only tools. |

| Intel oneAPI AI Analytics Toolkit | Provides optimized frameworks like PyTorch extensions and model compilers to accelerate deep learning training and inference on Intel hardware. |

| Apache Arrow / Parquet | Columnar in-memory (Arrow) and on-disk (Parquet) data formats enabling efficient data exchange and I/O for large omics and imaging datasets. |

| Zarr | A format for chunked, compressed, N-dimensional arrays, ideal for streaming large imaging or spatial transcriptomics data over networks. |

| Streamlit / Dash | Frameworks to rapidly build interactive web applications for model visualization and clinical validation without extensive front-end expertise. |

Diagram Title: Low-Latency Clinical Sensor Analytics Pipeline

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: General Model Selection

Q1: For a clinical trial patient stratification task requiring a result within 2 hours, my highly accurate ensemble model takes 8 hours to run. What are my primary options? A1: You face a direct fidelity-speed trade-off. Your options are:

- Model Simplification: Switch to a simpler, faster model (e.g., Logistic Regression over a Deep Neural Network). Anticipate a potential 5-15% drop in AUC but achieve execution in minutes.

- Model Compression: Apply techniques like pruning or quantization to your existing ensemble. This can reduce runtime by 50-70% with <3% accuracy loss.

- Hardware Acceleration: Utilize GPU inference or specialized hardware (TPUs), which can slash runtime by 60-80% without model changes, though it introduces infrastructure dependencies.

- Approximate Computing: Use early exit mechanisms or reduced precision calculations to meet the deadline, accepting a variable performance penalty.

Q2: My complex graph neural network (GNN) for protein interaction prediction is accurate but a "black box." How can I improve interpretability for regulatory review without starting over? A2: You can adopt post-hoc interpretability techniques:

- Feature Attribution: Apply SHAP (SHapley Additive exPlanations) or Integrated Gradients to identify which input features (e.g., amino acid sequences, binding pockets) most influenced the prediction.

- Surrogate Models: Train a simple, interpretable model (like a decision tree) to approximate the predictions of your GNN on a specific subset of data. The surrogate's logic provides an explainable approximation.

- Attention Visualization: If your GNN uses attention mechanisms, visualize the attention weights to see which parts of the protein graph the model "attends to."

Troubleshooting Guide: Performance Degradation

Issue: After compressing my model to increase speed, I observe a significant drop in performance on external validation data.

| Possible Cause | Diagnostic Check | Recommended Remediation |

|---|---|---|

| Over-Aggressive Pruning | Check the percentage of weights pruned. If >70%, likely too high. | Implement iterative pruning with fine-tuning. Prune 20% of weights, then re-train for 5 epochs. Repeat. |

| Quantization Drift | Compare the range of activations in the original FP32 model vs. the quantized INT8 model. | Use quantization-aware training (QAT) or select a per-channel quantization scheme to minimize error. |

| Loss of Rare but Critical Features | Use SHAP on both original and compressed models. Identify if high-importance, low-frequency features are now ignored. | Employ knowledge distillation. Use the original model's predictions as "soft labels" to fine-tune the compressed model, preserving nuanced logic. |

Experimental Protocol: Benchmarking Trade-offs

Protocol Title: Standardized Evaluation of Model Fidelity, Interpretability, and Speed for Clinical Biomarker Discovery.

Objective: To quantitatively compare candidate models across the three axes to inform selection for a time-sensitive translational study.

Materials & Workflow:

- Datasets: Hold out a temporally distinct or geographically external test set to mimic real-world validation.

- Models: Train (1) High-fidelity model (e.g., XGBoost, Deep NN), (2) Interpretable model (e.g., Logistic Regression, Decision Tree), (3) Compressed version of (1).

- Metrics:

- Fidelity: AUC-ROC, Balanced Accuracy on external set.

- Interpretability: For linear models: Coefficient magnitude/p-value. For tree-based: Feature importance. For black-box: Time to generate satisfactory explanation via LIME/SHAP.

- Speed: Median inference time per sample (ms) on a standardized CPU/GPU.

- Procedure: a. Train all models on the same training split. b. Evaluate fidelity metrics on the external test set. c. For each model, generate explanations for 100 random test samples. Record the time required and poll domain experts for explanation utility (scale 1-5). d. Run inference on all test samples 100 times, recording latency. e. Compile results into a comparative table.

Example Results Table:

| Model | AUC-ROC | Inference Time (ms/sample) | Explanation Time (sec) | Expert Explanation Score (1-5) |

|---|---|---|---|---|

| Deep Neural Network (Base) | 0.92 | 45.2 | 12.5 | 1.5 |

| Pruned & Quantized DNN | 0.89 | 6.1 | 8.7 | 1.8 |

| XGBoost | 0.91 | 3.5 | 2.3 | 4.2 |

| Logistic Regression | 0.86 | <0.1 | 0.5 | 5.0 |

Diagram: Model Selection Decision Pathway

Diagram Title: Clinical Model Selection Decision Tree

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Category | Function in Computational Research |

|---|---|---|

| SHAP (SHapley Additive exPlanations) | Software Library | Provides unified framework for explaining model predictions by quantifying each feature's contribution. Critical for black-box model interpretability. |

| TensorRT / ONNX Runtime | Optimization SDK | High-performance inference engines that optimize trained models (via layer fusion, precision calibration) for ultra-fast deployment on GPU/CPU. |

| Weights & Biases (W&B) / MLflow | Experiment Tracking | Platforms to log experiments, track metrics (accuracy, latency), and manage model versions, essential for rigorous trade-off analysis. |

| LIME (Local Interpretable Model-agnostic Explanations) | Software Library | Creates local, interpretable surrogate models to approximate predictions for individual instances, aiding per-prediction explanation. |

| PyTorch / TensorFlow Model Pruning APIs | Library Module | Provide tools to systematically remove unimportant network weights (pruning) to reduce model size and increase inference speed. |

| Quantization Toolkits (e.g., PyTorch Quantization) | Library Module | Enable conversion of model weights/activations from 32-bit to 8-bit integers, reducing memory bandwidth and compute requirements. |

| Domain-Specific Simulators (e.g., Pharmacokinetic) | Software | Generate synthetic or augmented data for training when real clinical data is limited, impacting model fidelity and generalizability. |

Speed Engineering for Biomedicine: Practical Strategies to Accelerate Model Inference & Training

This technical support center provides guidance for researchers and drug development professionals working to reduce computational time for clinical application of models. Below are troubleshooting guides and FAQs for common issues encountered when utilizing accelerated hardware.

Troubleshooting Guides & FAQs

Q1: My multi-GPU training job shows poor scaling efficiency (e.g., < 70% with 4 GPUs). What are the primary bottlenecks and solutions?

A: This is typically caused by data loading, communication overhead, or workload imbalance.

- Checkpoint 1: Data Pipeline. Ensure your data loader is not CPU-bound. Use `tf.data` or `torch.utils.data` with prefetching and multiple workers. Monitor CPU/GPU utilization. If CPU is at 100%, the GPUs are starved for data.

- Checkpoint 2: Communication. For NVIDIA GPUs, use the `nccl` backend. Reduce gradient synchronization frequency if applicable (e.g., use larger batch sizes per GPU). For model parallelism, profile inter-GPU transfer times.

- Checkpoint 3: Batch Size/Workload. Ensure the global batch size is appropriately scaled. Very small per-GPU batches increase communication overhead.

Q2: My TPU (v2/v3/v4) pod is throwing "Transient network errors" during long training runs. How can I stabilize this?

A: Network instability in TPU pods can be mitigated.

- Solution 1: Implement Checkpointing. Save frequent training checkpoints (e.g., every 100 steps) to Cloud Storage. Implement a retry loop in your training script to load the latest checkpoint and restart from the last saved step upon encountering this error.

- Solution 2: Optimize Data Location. Store your training data in the same Google Cloud region as your TPU pod to minimize network latency and potential errors.

- Solution 3: Update Libraries. Ensure you are using the latest stable versions of the `jax`, `flax`, or `tensorflow-tpu` libraries, which often contain driver and network stack improvements.
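The checkpoint-and-retry pattern from Solution 1 can be sketched independently of any accelerator library. Everything here is an assumption for illustration: `TransientError` stands in for whatever exception the runtime raises, checkpoints are JSON files on local disk rather than Cloud Storage objects, and `flaky_step` simulates a single network drop.

```python
import json
import os
import tempfile


class TransientError(RuntimeError):
    """Hypothetical stand-in for a recoverable network failure."""


def save_checkpoint(path, step, state):
    tmp = path + ".tmp"
    with open(tmp, "w") as fh:
        json.dump({"step": step, "state": state}, fh)
    os.replace(tmp, path)  # atomic rename avoids half-written checkpoints


def load_checkpoint(path):
    if not os.path.exists(path):
        return 0, {"loss": None}  # fresh start
    with open(path) as fh:
        payload = json.load(fh)
    return payload["step"], payload["state"]


def train(path, total_steps, train_step, checkpoint_every=100, max_retries=5):
    retries = 0
    while True:
        step, state = load_checkpoint(path)  # resume from the last saved step
        try:
            while step < total_steps:
                state = train_step(step, state)
                step += 1
                if step % checkpoint_every == 0:
                    save_checkpoint(path, step, state)
            return step, retries
        except TransientError:
            retries += 1
            if retries > max_retries:
                raise


failures = {"remaining": 1}


def flaky_step(step, state):
    """Toy training step that fails once at step 250."""
    if step == 250 and failures["remaining"] > 0:
        failures["remaining"] -= 1
        raise TransientError("simulated network drop")
    return {"loss": 1.0 / (step + 1)}


ckpt_path = os.path.join(tempfile.mkdtemp(), "ckpt.json")
final_step, retry_count = train(ckpt_path, 300, flaky_step)
```

In this run the failure at step 250 costs the 50 steps since the checkpoint at step 200, which shows the trade-off behind the checkpoint interval: shorter intervals lose less work but spend more time writing.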

Q3: After deploying a trained neural network to an FPGA (e.g., using Xilinx Vitis AI), the inference latency is higher than expected. How do I profile and resolve this?

A: This indicates a suboptimal implementation of the model on the FPGA fabric.

- Step 1: Profiling. Use the vendor's profiling tools (e.g., Vitis AI Profiler) to identify layers with the highest latency. The bottleneck is often data movement between the host and FPGA or between FPGA kernels.

- Step 2: Model Quantization. Ensure you are using integer quantization (INT8) instead of floating-point (FP32) for weights and activations. This drastically improves throughput and reduces latency on FPGAs.

- Step 3: Batch Processing. FPGA efficiency increases with batch size. If doing single-sample inference, consider batching requests. Optimize the Deep Learning Processing Unit (DPU) configuration for your target batch size.

Q4: When porting a PyTorch model to TPU using PyTorch/XLA, I encounter "Graph compilation too slow" warnings. Is this normal and how can I speed it up?

A: Initial compilations are slow, but can be managed.

- Explanation: TPUs compile your model into a static graph. The first few steps are very slow as XLA traces and compiles the computation. This is normal.

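- Mitigation: Use a smaller subset of data (e.g., 1-2 batches) for a few training steps to perform the initial compilation. Call `xm.mark_step()` at the end of each step so XLA cuts and executes the graph at a consistent boundary, and keep tensor shapes static to avoid recompilation. Within a run, the compiled graph is reused as long as the traced computation hasn't changed; reuse across runs depends on a persistent compilation cache being enabled in your PyTorch/XLA version.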

Q5: My GPU memory is exhausted during training, even with moderate batch sizes. What are the key strategies to reduce memory footprint?

A: Apply the following techniques, often used in combination:

- Gradient Accumulation: Use smaller per-step (micro) batches while keeping the effective batch size, by accumulating gradients over multiple forward/backward passes before calling optimizer.step().

- Mixed Precision Training: Use AMP (Automatic Mixed Precision) with torch.cuda.amp or TensorFlow's tf.keras.mixed_precision. This runs most operations in FP16, reducing memory usage and often increasing speed on modern GPUs (Volta, Ampere).

- Checkpointing (Gradient Checkpointing): Trade compute for memory by recomputing intermediate activations during the backward pass instead of storing them all. Use torch.utils.checkpoint or tf.recompute_grad.
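The gradient accumulation idea can be checked on a toy problem. The following is a minimal sketch in plain Python, assuming a one-parameter model y_hat = w * x with a mean-squared-error loss; the data values and function names are illustrative and not taken from any framework. It shows that accumulating micro-batch gradients, each scaled by 1 / accum_steps, reproduces the full-batch gradient when the micro-batches are of equal size.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y) pair for the toy model y_hat = w * x


def mse_grad(w: float, batch: List[Sample]) -> float:
    """Gradient of the mean squared error with respect to w over one batch."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w: float, data: List[Sample], micro_batch_size: int) -> float:
    """Accumulate micro-batch gradients, scaling each by 1 / accum_steps."""
    micro_batches = [
        data[i:i + micro_batch_size] for i in range(0, len(data), micro_batch_size)
    ]
    accum_steps = len(micro_batches)
    grad = 0.0
    for micro_batch in micro_batches:
        # Only one micro-batch has to be held in memory at a time.
        grad += mse_grad(w, micro_batch) / accum_steps
    # In a real training loop, the optimizer step would be taken here,
    # once per accumulation cycle.
    return grad


# Illustrative data only.
data = [(1.0, 2.0), (2.0, 3.9), (3.0, 6.1), (4.0, 8.2),
        (5.0, 9.8), (6.0, 12.1), (7.0, 13.9), (8.0, 16.0)]
full_batch_grad = mse_grad(0.5, data)
accum_grad = accumulated_grad(0.5, data, micro_batch_size=2)
print(full_batch_grad, accum_grad)
```

In a framework such as PyTorch the same pattern usually means dividing the loss by the number of accumulation steps before the backward pass and calling optimizer.step() only once per cycle.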

Performance Benchmark Data

Table 1: Comparative Inference Latency for a 3D U-Net Segmentation Model (Lower is Better)

| Hardware Platform | Precision | Batch Size=1 (ms) | Batch Size=8 (ms) | Notes |

|---|---|---|---|---|

| NVIDIA A100 (40GB) | FP16 | 45 | 210 | TensorRT optimization applied |

| Google TPU v4 (1 core) | BF16 | 62 | 285 | Using compiled JAX (jit) |

| Xilinx Alveo U250 | INT8 | 38 | 320 | Significant overhead for batch increase |

| Intel Xeon 8380 (CPU) | FP32 | 1120 | 8900 | Baseline for comparison |

Table 2: Relative Training Time & Cost for a Large Language Model Fine-Tuning (10 Epochs)

| Configuration | Total Time (Hours) | Estimated Cloud Cost (USD) | Time vs. A100 Baseline |

|---|---|---|---|

| 4x NVIDIA A100 (NVLink) | 12.0 | ~$72.00 | 1.0x (Baseline) |

| TPU v3-8 Pod | 8.5 | ~$51.00 | 0.7x |

| 8x NVIDIA V100 (PCIe) | 28.0 | ~$89.60 | 2.3x |

| Single High-End CPU Node | 240.0 (est.) | ~$96.00 | 20.0x |

Experimental Protocol: Benchmarking Hardware for Variant Calling

Objective: Compare the accuracy, throughput, and cost of GPU, TPU, and FPGA implementations of the DeepVariant pipeline.

Materials:

- Dataset: GIAB (Genome in a Bottle) Ashkenazim Trio, HG002 sample, 30x WGS.

- Baseline: DeepVariant v1.5 running on a 32-core CPU node.

- Test Platforms: NVIDIA A100 (GPU), Google TPU v2-8 (TPU), AWS EC2 F1 instance with Xilinx FPGA.

Methodology:

- Containerization: Package each hardware-specific implementation (CPU, GPU, TPU, FPGA) into Docker containers for consistent environment control.

- Data Preprocessing: Convert the input BAM files to the specific tensor format required by each platform (e.g., TFRecords for TPU).

- Execution: Run the variant calling pipeline on a dedicated, identical 10-genome region for each platform. Capture:

  - Wall-clock time for the make_examples and call_variants stages.

  - Peak memory usage.

  - Compute cost based on cloud provider list prices.

- Validation: Compare the output VCF files to the GIAB benchmark using hap.py to calculate precision, recall, and F1 score.

- Analysis: Normalize all metrics (time, cost) per gigabase of sequenced DNA analyzed. Compare accuracy parity.
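The analysis step can be sketched as a pair of small helpers. This is a minimal illustration, assuming that wall-clock time, cost, and the number of bases analyzed have already been recorded for a run; the function names and the example figures are hypothetical and are not measurements from this protocol.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


def per_gigabase(value: float, bases_analyzed: float) -> float:
    """Normalize a metric (seconds, USD, ...) per gigabase (1e9 bases) analyzed."""
    return value / (bases_analyzed / 1e9)


# Hypothetical run: 1800 s wall-clock, 2.40 USD, 3e10 bases analyzed.
seconds_per_gb = per_gigabase(1800.0, 3e10)
usd_per_gb = per_gigabase(2.40, 3e10)
f1 = f1_score(precision=0.999, recall=0.997)
print(seconds_per_gb, usd_per_gb, round(f1, 4))
```

Normalizing before comparing keeps platforms comparable even if a run had to be restricted to a different region size.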

Hardware Acceleration Workflow for Genomic Analysis

Hardware Selection Workflow for Biomedical AI

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Hardware-Accelerated Research |

|---|---|

| NVIDIA NGC Containers | Pre-optimized Docker containers for biomedical frameworks (MONAI, Clara) ensuring reproducible GPU performance. |

| Google Cloud Deep Learning VM Images | Pre-configured environments with TPU drivers, JAX, and TensorFlow pre-installed for rapid TPU deployment. |

| FPGA Bitstreams (from Vendor IP) | Pre-synthesized hardware configurations (e.g., for Vitis AI DPU) that define the neural network accelerator on the FPGA fabric. |

| High-Performance Data Loaders (e.g., DALI, tf.data) | Software libraries that efficiently decode and augment large biomedical images/genomic data on the CPU, preventing GPU/TPU starvation. |

| Mixed Precision Training Autocasters (AMP) | Libraries (torch.cuda.amp, tf.keras.mixed_precision) that manage FP16/BF16 conversion to reduce memory use and accelerate training on compatible hardware. |

| Hardware-Specific Profilers (NSight, TPU Profiler, Vitis Analyzer) | Essential tools for identifying bottlenecks in computation, memory, and data transfer unique to each hardware platform. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During model pruning for a medical image classifier, my model's accuracy drops catastrophically (>15%) after applying a standard magnitude-based pruning. What could be the cause and how do I fix it? A: This is often due to aggressive, one-shot pruning. Medical imaging models often have sensitive, task-specific filters. Implement iterative pruning with fine-tuning. Prune only 10-20% of the weights in each iteration, followed by a short fine-tuning cycle on your clinical dataset. Consider structured pruning (removing entire channels) for better hardware compatibility. Use L1-norm for convolutional filters and ensure you are pruning weights from later layers first, as early layers capture general features critical for medical tasks.

Q2: After quantizing my PyTorch model from FP32 to INT8 for deployment on a medical device, I get inconsistent or erroneous outputs at the patient's bedside. The model worked fine in the lab. A: This typically indicates a calibration data mismatch. The tensors used for quantization calibration (to determine scaling factors) were not representative of real-world clinical data. Solution: Re-calibrate using a diverse, representative subset of your actual clinical deployment data, not just the training set. Ensure no data augmentation is applied during calibration. Also, check for layers that are sensitive to quantization (e.g., first and last layers); consider keeping them in FP16 (mixed-precision).
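The effect of a calibration mismatch can be illustrated without any framework. The sketch below assumes simple symmetric INT8 quantization with a single scale per tensor; the "lab" and "clinical" value lists are invented for illustration. When the scale is calibrated on data with a narrower range than the deployment data, out-of-range values are clipped and the reconstruction error grows sharply.

```python
from typing import List


def calibrate_scale(samples: List[float]) -> float:
    """Symmetric INT8 scale chosen so the largest calibration value maps to 127."""
    return max(abs(v) for v in samples) / 127.0


def quantize_dequantize(x: float, scale: float) -> float:
    """Quantize to the INT8 range [-127, 127], then map back to a float."""
    q = max(-127, min(127, round(x / scale)))
    return q * scale


def max_error(values: List[float], scale: float) -> float:
    """Worst-case absolute reconstruction error over a set of values."""
    return max(abs(v - quantize_dequantize(v, scale)) for v in values)


# Illustrative activations: lab data spans [-1, 1], clinical data spans [-2.5, 2.4].
lab = [-1.0, -0.3, 0.2, 0.9, 1.0]
clinical = [-2.5, -0.4, 0.3, 1.8, 2.4]

err_mismatched = max_error(clinical, calibrate_scale(lab))    # clipping dominates
err_matched = max_error(clinical, calibrate_scale(clinical))  # rounding error only
print(err_mismatched, err_matched)
```

Production toolchains use more elaborate calibrators (per-channel scales, histogram or entropy methods), but the dependence on representative calibration data is the same.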

Q3: My distilled student model fails to match the teacher's performance on rare but critical disease classes in a multi-class diagnosis model. How can I improve knowledge transfer for these minority classes? A: The standard distillation loss may be dominated by common classes. Use weighted or focal distillation loss. Assign higher weights to the distillation loss for minority class logits. Alternatively, employ attention transfer—force the student to mimic the teacher's feature map activations in critical convolutional layers, which often encode subtle, class-specific features crucial for rare conditions.

Q4: When implementing knowledge transfer from a large public dataset (e.g., ImageNet) to a small, proprietary clinical dataset, my model overfits quickly. What's the best practice? A: This requires careful progressive fine-tuning and regularization.

- Initialization: Load weights pre-trained on the large source dataset.

- Stage-1 Fine-tuning: Unfreeze only the last few layers, train with a very low learning rate (e.g., 1e-5) and strong regularization (e.g., dropout, weight decay) on your clinical data.

- Stage-2 Fine-tuning: Gradually unfreeze earlier layers (those closer to the input), using differential learning rates in which layers nearer the output receive higher rates. Always use aggressive data augmentation (specific to your medical modality) to artificially expand your dataset.

Q5: My pruned and quantized model runs faster on the server GPU but shows no speed-up on the target hospital edge device (e.g., a mobile GPU). Why? A: Pruning and quantization must be hardware-aware. Unstructured sparsity (random weight pruning) is not efficiently supported by most edge device inference engines. You must use structured pruning. For quantization, ensure your edge device's library (e.g., TensorRT, Core ML, TFLite) supports the specific INT8 operators you are using. The format of the quantized model (e.g., TFLite vs. ONNX) also critically impacts performance.

Experimental Protocols & Data

Protocol 1: Iterative Magnitude Pruning for a 3D CNN (e.g., for MRI Analysis)

- Train Base Model: Train your 3D CNN to convergence on the clinical dataset.

- Pruning Loop: For n iterations (e.g., 10):

  - a. Rank Parameters: Compute the L1-norm for each convolutional filter in the network.

  - b. Prune Lowest-K%: Remove the lowest K% of filters (start with K=10%).

  - c. Fine-Tune: Retrain the pruned model for 2-3 epochs with a 10x lower learning rate.

- Final Fine-Tune: After final pruning level is reached, fine-tune the model for a full training schedule.
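The pruning loop can be sketched in plain Python to make the ranking and the compounding schedule concrete. This is an illustration under simplifying assumptions: each filter is a flat list of weights, the fine_tune callback is a placeholder for real retraining, and all names are hypothetical. In PyTorch, torch.nn.utils.prune provides structured pruning utilities for the same purpose.

```python
import random
from typing import Callable, List, Tuple

Filter = List[float]


def l1_norm(filt: Filter) -> float:
    """L1-norm of one convolutional filter, given as a flat list of weights."""
    return sum(abs(w) for w in filt)


def prune_lowest_filters(filters: List[Filter], fraction: float) -> List[Filter]:
    """Remove the given fraction of filters with the smallest L1-norm."""
    n_remove = int(len(filters) * fraction)
    ranked = sorted(range(len(filters)), key=lambda i: l1_norm(filters[i]))
    to_remove = set(ranked[:n_remove])
    return [f for i, f in enumerate(filters) if i not in to_remove]


def iterative_prune(
    filters: List[Filter],
    fraction: float,
    iterations: int,
    fine_tune: Callable[[List[Filter]], List[Filter]],
) -> Tuple[List[Filter], List[int]]:
    """Alternate pruning and fine-tuning; return filters and the count history."""
    history = [len(filters)]
    for _ in range(iterations):
        filters = prune_lowest_filters(filters, fraction)
        filters = fine_tune(filters)  # placeholder for 2-3 epochs of retraining
        history.append(len(filters))
    return filters, history


rng = random.Random(0)
# 64 hypothetical filters with 27 weights each (a 3x3x3 kernel, flattened).
layer = [[rng.gauss(0.0, 1.0) for _ in range(27)] for _ in range(64)]
pruned, history = iterative_prune(layer, fraction=0.10, iterations=3,
                                  fine_tune=lambda fs: fs)
print(history)
```

Because each round removes a fraction of the filters that remain, the schedule compounds: ten rounds at 10% leave roughly a third of the original filters, not zero.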

Protocol 2: Post-Training Quantization (PTQ) for a TensorFlow Lite Deployment

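- Export Model: Save your trained TF model in SavedModel format.

- Representative Dataset: Prepare a generator that yields ~200-500 pre-processed samples from your clinical validation set (no augmentation).

- Converter Setup: Configure the TFLite converter with default optimizations, the representative dataset, and INT8 as the target operator set.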

- Convert & Validate: Run conversion and rigorously validate accuracy on a held-out clinical test set.
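A possible shape for the converter setup is sketched below, assuming TensorFlow 2.x with the tf.lite.TFLiteConverter API. The function name, its arguments, and the choice of full-integer INT8 input and output are assumptions for illustration rather than the original protocol's exact configuration. Each calibration sample is assumed to be a float32 array that already includes the batch dimension the model expects.

```python
from typing import Iterable


def convert_saved_model_to_int8_tflite(
    saved_model_dir: str,
    calibration_samples: Iterable,
    output_path: str,
) -> int:
    """Post-training INT8 quantization of a SavedModel; returns the model size in bytes."""
    # Imported lazily so this sketch can be loaded on machines without TensorFlow.
    import tensorflow as tf

    def representative_dataset():
        # Yield one pre-processed, non-augmented sample at a time for calibration.
        for sample in calibration_samples:
            yield [sample]

    converter = tf.lite.TFLiteConverter.from_saved_model(saved_model_dir)
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    converter.representative_dataset = representative_dataset
    # Restrict to INT8 kernels; conversion fails if an op has no INT8 implementation.
    converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS_INT8]
    converter.inference_input_type = tf.int8
    converter.inference_output_type = tf.int8

    tflite_model = converter.convert()
    with open(output_path, "wb") as f:
        f.write(tflite_model)
    return len(tflite_model)
```

If some operators lack INT8 kernels on the target device, relaxing supported_ops to allow float fallback is a common compromise, at the cost of mixed-precision execution.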

Comparative Performance Data

Table 1: Impact of Acceleration Techniques on a DenseNet-121 Model for Chest X-ray Classification

| Technique | Model Size (MB) | Inference Time (ms)* | Top-1 Accuracy (%) | Hardware |

|---|---|---|---|---|

| Baseline (FP32) | 30.5 | 42 | 94.2 | NVIDIA V100 |

| Pruned (50% structured) | 16.1 | 28 | 93.8 | NVIDIA V100 |

| Quantized (INT8) | 7.8 | 12 | 93.5 | NVIDIA V100 |

| Pruned & Quantized | 4.2 | 9 | 93.1 | NVIDIA V100 |

| Distilled Student (MobileNetV2) | 9.1 | 8 | 92.7 | NVIDIA V100 |

| All Techniques Combined | 3.5 | 6 | 92.0 | Jetson Xavier |

*Batch size = 1, simulating single-image diagnosis.

Visualizations

Model Distillation Workflow for Clinical Deployment

Post-Training Quantization (PTQ) Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Hardware Tools for Clinical Model Acceleration

| Tool Name | Category | Function/Benefit | Typical Use in Clinical Research |

|---|---|---|---|

| PyTorch / TensorFlow | Framework | Core libraries for building, training, and implementing acceleration techniques. | Prototyping distillation, pruning, and quantization algorithms. |

| TensorRT (NVIDIA) | Inference Optimizer | Converts trained models to highly optimized runtime for NVIDIA GPUs. | Deploying quantized models on clinical workstations or edge devices. |

| ONNX Runtime | Cross-Platform Engine | High-performance inference for models exported in ONNX format. | Ensuring consistent, fast deployment across heterogeneous hospital IT systems. |

| Weights & Biases / MLflow | Experiment Tracking | Logs hyperparameters, metrics, and model artifacts for reproducibility. | Tracking the performance of different pruning schedules or distillation losses. |

| Sparsity & Quantization Libs | Specialized Libraries | e.g., torch.nn.utils.prune, tfmot (TensorFlow Model Opt.). | Applying structured pruning and quantization-aware training. |

| Clinical Edge Device | Target Hardware | e.g., NVIDIA Jetson AGX, Google Coral Dev Board. | Final deployment target for accelerated models; used for benchmarking. |

| DICOM Simulators | Data Interface | Software to simulate real-time DICOM streams from modalities (e.g., MRI, CT). | Testing the latency and throughput of the accelerated model in a realistic clinical data pipeline. |

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when designing and deploying lightweight neural networks (e.g., MobileNet, EfficientNet) for clinical applications, framed within the thesis goal of reducing computational time for model research in clinical and drug development settings.

Frequently Asked Questions (FAQs)

Q1: My quantized MobileNetV3 model shows a severe accuracy drop when deployed on a mobile clinical device. What are the primary causes and fixes? A: This is typically due to aggressive post-training quantization or mismatched calibration data. First, ensure your calibration dataset (used during quantization) is representative of the clinical data distribution. Consider using quantization-aware training (QAT) instead of post-training quantization. For TensorFlow Lite, verify the deployment uses the correct input data type (e.g., uint8 vs. float32). If accuracy is still insufficient and the hardware supports it, fall back to a higher-precision scheme (e.g., FP16 instead of INT8), trading some speed and model size for accuracy.

Q2: During transfer learning with EfficientNet-B0 on a small medical image dataset, the model converges quickly but performs poorly on the validation set. What should I adjust? A: This indicates severe overfitting. Key adjustments include:

- Stronger Data Augmentation: For medical images, use domain-specific augmentations like random rotations, flips, and mild elastic deformations. Avoid augmentations that alter clinical semantics.

- Fine-tuning Strategy: Do not unfreeze the entire network. Unfreeze only the last 10-20% of layers. Use a lower learning rate (e.g., 1e-4 to 1e-5) for the unfrozen layers.

- Regularization: Increase dropout rates in the head classifier or add weight decay (L2 regularization).

- Label Verification: For clinical datasets, ensure validation labels are as accurate as training labels.

Q3: The latency of my EfficientNet model is higher than expected on an edge device, despite using a lightweight variant. How can I profile and reduce it? A: Follow this profiling protocol:

- Tool Use: Profile using device-specific tools (e.g., TensorFlow Lite Profiler, Android Systrace, Nsight Systems for Jetson).

- Identify Bottleneck: The issue may not be the model but data preprocessing (e.g., on-CPU image resizing) or inefficient data transfer. The profiler will show operator-wise latency.

- Optimize: If the bottleneck is in convolutional layers:

- Consider replacing standard convolutions with separable convolutions if not already present.

- Reduce input image resolution. Even a small reduction (e.g., from 384x384 to 320x320) cuts convolutional compute by roughly 30%, because cost scales with the number of input pixels.

- Ensure the model is converted to TensorFlow Lite with GPU or DSP delegation enabled if supported by the device.

Q4: How do I choose between MobileNetV2, MobileNetV3, and EfficientNet-Lite for a dermatology image classification task with limited compute budget? A: Base your choice on the following comparative metrics from recent benchmarks:

Table 1: Comparison of Lightweight Network Families (Typical Configurations)

| Model | Input Resolution | Params (M) | MAdds (B) | Top-1 Acc (ImageNet)* | Key Feature for Clinical Use |

|---|---|---|---|---|---|

| MobileNetV2 (1.0) | 224x224 | 3.4 | 0.3 | ~71.8% | Inverted residual blocks, good balance. |

| MobileNetV3-Large | 224x224 | 5.4 | 0.22 | ~75.2% | NAS-optimized, h-swish activation, squeeze-excite. |

| EfficientNet-B0 | 224x224 | 5.3 | 0.39 | ~77.1% | Compound scaling, state-of-the-art efficiency. |

| EfficientNet-Lite0 | 224x224 | 4.7 | 0.29 | ~75.1% | Optimized for CPU/TPU, no swish. |

*ImageNet accuracy is a proxy; always validate on your target clinical dataset.

Protocol for Selection:

- Benchmark: Implement all candidate models using the same framework (e.g., PyTorch).

- Profile: Measure the actual inference latency and memory footprint on your target deployment hardware (e.g., specific edge device or hospital server).

- Validate: Fine-tune each model from a pre-trained checkpoint on your clinical training data and evaluate it on a held-out subset. The model with the best accuracy-efficiency trade-off on your target hardware is optimal.

Q5: I need to implement a custom lightweight layer for a specific clinical data modality. What are the essential design principles? A: Adhere to the core principles of architectural efficiency:

- Avoid Dense Operations: Favor separable, grouped, or depthwise convolutions over standard dense convolutions.

- Minimize Data Movement: Design the layer to keep computation localized and reduce the footprint of intermediate activations.

- Use Efficient Activations: Prefer ReLU6 or h-swish over computationally expensive activations like sigmoid in core paths.

- Prune Early: Design with structural pruning in mind (e.g., channels that can be removed without breaking layer dimensions).

Experimental Protocol: Benchmarking Lightweight Networks on a Clinical Dataset

Objective: To compare the performance and efficiency of MobileNetV2, MobileNetV3, and EfficientNet-B0 for diabetic retinopathy detection.

Materials & Dataset:

- Dataset: APTOS 2019 Blindness Detection (retinal fundus images).

- Preprocessing: Resize to 224x224, normalize, apply augmentations (horizontal/vertical flip, slight rotation).

- Hardware: One GPU for training, one target edge device (e.g., NVIDIA Jetson Nano) for inference profiling.

Methodology:

- Model Preparation: Load ImageNet pre-trained versions of all three models in PyTorch.

- Adaptation: Replace the final classification layer with a new head: Global Average Pooling → Dropout (0.2) → Fully Connected Layer (5 classes).

- Training:

- Phase 1 (Frozen): Train only the new head for 10 epochs. Use Adam optimizer (lr=1e-3), Cross-Entropy Loss.

- Phase 2 (Fine-tune): Unfreeze the last 15% of layers. Train for 30 epochs with a reduced learning rate (lr=1e-4) and early stopping.

- Evaluation Metrics: Record validation accuracy, F1-score, model size (MB), and on-device inference latency (averaged over 1000 runs).
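The latency measurement can be sketched with a small timing harness. This is a minimal example using only the standard library; the dummy_inference function is a stand-in for a real model call, and the warm-up count is an assumption. On a GPU or other asynchronous accelerator, the device would need to be synchronized inside the timed region for the numbers to be meaningful.

```python
import math
import statistics
import time
from typing import Callable, Dict


def benchmark(fn: Callable[[], object], n_warmup: int = 10, n_runs: int = 1000) -> Dict[str, float]:
    """Time repeated calls to fn and report mean and p95 latency in milliseconds."""
    for _ in range(n_warmup):
        fn()  # warm-up runs are excluded (caches, lazy initialization)
    latencies_ms = []
    for _ in range(n_runs):
        start = time.perf_counter()
        fn()
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    latencies_ms.sort()
    # Nearest-rank 95th percentile.
    p95_index = math.ceil(0.95 * len(latencies_ms)) - 1
    return {
        "mean_ms": statistics.fmean(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
        "runs": float(n_runs),
    }


def dummy_inference() -> int:
    """Stand-in workload; replace with a single-sample model forward pass."""
    return sum(i * i for i in range(1000))


stats = benchmark(dummy_inference, n_warmup=5, n_runs=200)
print(stats)
```

Reporting a tail percentile alongside the mean matters for clinical workflows, where occasional slow inferences are often more disruptive than a slightly higher average.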

Workflow Diagram

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Lightweight Network Research in Clinical AI

| Item | Function & Rationale |

|---|---|

| PyTorch / TensorFlow | Core deep learning frameworks with extensive pre-trained model zoos and mobile deployment tools (TorchScript, TFLite). |

| TensorFlow Lite / ONNX Runtime | Critical for deployment. Converts trained models to optimized formats for execution on mobile, embedded, or edge devices. |

| Weights & Biases (W&B) / MLflow | Experiment tracking to log training metrics, hyperparameters, and model artifacts, ensuring reproducibility. |

| NVIDIA TAO Toolkit / Apple Core ML Tools | Platform-specific toolkits to streamline the adaptation, optimization, and deployment of models on specific hardware (NVIDIA, Apple). |

| OpenCV / scikit-image | For efficient, reproducible image preprocessing and augmentation pipelines that can be mirrored in deployment. |

| Docker | Containerization to create identical software environments for training and initial validation, mitigating "it works on my machine" issues. |

Technical Support Center: Troubleshooting & FAQs

Q1: My distributed model training job in the cloud is failing with "CUDA out of memory" errors, even though the total GPU memory across nodes seems sufficient. What could be the cause?

A: This is often due to how data parallelism is configured. Each GPU worker loads a full copy of the model, so memory is not pooled across nodes. If your model size is 5GB, 4 workers will require 20GB collectively, and every individual GPU must have well over 5GB available, because gradients, optimizer state, and activations also reside on each device. Check your batch size per worker. Use gradient accumulation for large batches. In PyTorch, ensure DistributedDataParallel is correctly initialized. Calling torch.cuda.empty_cache() releases only cached, unused blocks, so it can reduce fragmentation but will not fix a genuine capacity shortfall.

Q2: When deploying a trained model to an edge device for point-of-care analysis, the inference latency is unacceptably high. How can I reduce it? A: High edge latency typically stems from a model that has not been optimized for the target hardware. Follow this protocol:

- Profile: Use tools like torch.profiler or TensorFlow Profiler to identify bottlenecks (e.g., specific operator costs).

- Optimize: Convert the model to a hardware-optimized format (e.g., TensorRT for NVIDIA Jetson, OpenVINO for Intel, or TFLite for ARM).

- Quantize: Apply post-training quantization (PTQ) to reduce model precision from FP32 to INT8, drastically speeding up inference on supported edge hardware with minimal accuracy loss.

- Benchmark: Compare latencies before and after optimization.

Q3: Data synchronization between edge devices and the central cloud repository is slow, delaying aggregate analysis. What are the best practices? A: Implement a tiered synchronization strategy:

- Protocol: Use delta synchronization, transmitting only data changed since the last sync.

- Compression: Apply lossless compression (e.g., gzip) or structured compression (e.g., Apache Parquet) before transmission.

- Orchestration: Schedule syncs during off-peak hours on the edge device or use a low-priority background process. For Kubernetes-based orchestration (KubeEdge, OpenYurt), adjust nodeSelector and tolerations to control where sync workloads run and to manage resource usage.
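Delta synchronization with compression can be sketched using only the standard library. The example below assumes records are small JSON-serializable dictionaries keyed by an identifier; the record contents and function names are hypothetical. A content hash per record decides what has changed since the last sync, and only the changed records are gzip-compressed for transmission.

```python
import gzip
import hashlib
import json
from typing import Dict, Tuple


def record_digest(record: dict) -> str:
    """Stable content hash of one record (keys sorted for determinism)."""
    encoded = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def build_delta(
    records: Dict[str, dict], synced_digests: Dict[str, str]
) -> Tuple[bytes, Dict[str, str]]:
    """Return a gzip payload with only new or changed records, plus updated digests."""
    changed = {}
    new_digests = {}
    for record_id, record in records.items():
        digest = record_digest(record)
        new_digests[record_id] = digest
        if synced_digests.get(record_id) != digest:
            changed[record_id] = record
    payload = gzip.compress(json.dumps(changed, sort_keys=True).encode("utf-8"))
    return payload, new_digests


# Hypothetical edge-side records.
records = {"scan-001": {"finding": "normal"}, "scan-002": {"finding": "nodule"}}
first_payload, digests = build_delta(records, {})        # first sync sends everything
records["scan-002"]["finding"] = "nodule, 6 mm"
second_payload, digests = build_delta(records, digests)  # second sync sends the change
changed_ids = sorted(json.loads(gzip.decompress(second_payload)))
print(changed_ids)
```

This sketch does not handle deletions; a real implementation would also need to transmit tombstones for records removed on the edge device.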

Q4: How do I ensure my computational workflow is reproducible when orchestrated across heterogeneous environments (cloud VM vs. edge server)? A: Utilize containerization and workflow managers.

- Methodology: Package your application and all dependencies into a Docker container. Use a workflow manager (e.g., Nextflow, Apache Airflow) to define the pipeline. Always declare explicit version tags for all tools and base images.

- Critical Step: Use a container registry (like Google Container Registry or Amazon ECR) to store the exact image used in production. For edge, ensure your orchestrator (e.g., K3s) pulls the correct image hash.

Q5: I'm experiencing network timeout errors when my edge device tries to send pre-processed data to a cloud API for secondary analysis. How can I make this more robust? A: Design for intermittent connectivity, a core challenge in point-of-care edge computing.

- Implement a local queue: Use a lightweight message queue (e.g., Redis, or a file-based queue) on the edge device to store outgoing data.

- Implement exponential backoff: The transmission service should retry failed sends with increasing delays (e.g., 1s, 2s, 4s, 8s).

- Fallback logic: Define a minimum data retention period on the edge. If connectivity is not restored, trigger an alert for manual data retrieval.
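The queue and retry logic above can be sketched as follows. This is a minimal in-memory illustration using the standard library; a real deployment would back the queue with Redis or files so that it survives restarts. The send callable, the flaky_send stand-in, and the injected sleep function are assumptions introduced for the example.

```python
import collections
import time
from typing import Callable, Deque, List, Tuple


def backoff_delays(base: float = 1.0, factor: float = 2.0, max_retries: int = 4) -> List[float]:
    """Exponentially increasing retry delays in seconds, e.g. 1, 2, 4, 8."""
    return [base * factor ** i for i in range(max_retries)]


def drain_queue(
    queue: Deque[str],
    send: Callable[[str], None],
    delays: List[float],
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, bool]:
    """Send queued items in order; return (items sent, whether the queue was emptied)."""
    sent = 0
    while queue:
        item = queue[0]  # peek, so the item stays queued until it is delivered
        for delay in [None] + list(delays):
            if delay is not None:
                sleep(delay)  # wait before each retry
            try:
                send(item)
            except (ConnectionError, TimeoutError):
                continue
            queue.popleft()
            sent += 1
            break
        else:
            # Retries exhausted: keep remaining data locally and let the caller alert.
            return sent, False
    return sent, True


# Simulated flaky uplink: the first two calls fail, later calls succeed.
attempts = {"count": 0}


def flaky_send(item: str) -> None:
    attempts["count"] += 1
    if attempts["count"] <= 2:
        raise ConnectionError("simulated network timeout")


slept: List[float] = []
outbox = collections.deque(["result-a", "result-b"])
sent, ok = drain_queue(outbox, flaky_send, backoff_delays(), sleep=slept.append)
print(sent, ok, slept)
```

Injecting the sleep function keeps the retry policy testable without real waiting; in production the default time.sleep (or a scheduler) would be used.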

Experimental Protocols for Computational Time Reduction

Protocol 1: Benchmarking Cloud vs. Edge Model Inference

Objective: Quantify latency and cost trade-offs for clinical model inference.

- Setup: Deploy identical, quantized TFLite models on (a) a cloud VM (n1-standard-4) and (b) an edge device (e.g., NVIDIA Jetson Nano).

- Data Stream: Simulate a continuous stream of 1000 sample medical images.

- Measurement: For each platform, measure p95 inference latency (ms) and total end-to-end processing time. For cloud, include network latency from a simulated edge client.

- Analysis: Calculate cost per 10,000 inferences on the cloud, factoring in VM instance time.
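The cost calculation in the analysis step can be written as a short function. This sketch assumes sequential single-sample inference billed by instance time; the hourly price and the utilization factor (the fraction of billed time actually spent on inference) are hypothetical inputs, so the result is not expected to reproduce any figure in the tables of this article.

```python
def cost_per_10k_inferences(
    avg_latency_ms: float,
    hourly_price_usd: float,
    utilization: float = 1.0,
) -> float:
    """Estimated instance cost (USD) for 10,000 sequential inferences."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    busy_seconds = avg_latency_ms / 1000.0 * 10_000
    billed_hours = busy_seconds / 3600.0 / utilization  # idle time is billed too
    return billed_hours * hourly_price_usd


# Hypothetical example: 36 ms average latency on a 1.00 USD/hour instance.
fully_busy = cost_per_10k_inferences(36.0, 1.00)
mostly_idle = cost_per_10k_inferences(36.0, 1.00, utilization=0.10)
print(round(fully_busy, 4), round(mostly_idle, 4))
```

The utilization term often dominates in clinical settings, where request volume is bursty and an always-on instance sits idle for much of the day.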

Protocol 2: Hybrid Workflow Orchestration for Training

Objective: Reduce total model training time by leveraging cloud bursting.

- Setup: Configure a Kubernetes cluster on-premises. Integrate with a cloud Kubernetes engine (e.g., GKE Autopilot).

- Orchestration: Use KubeSlice for seamless networking. Define a K8s HorizontalPodAutoscaler (HPA) policy.

- Trigger: When the on-premises GPU node utilization exceeds 85%, the HPA schedules additional training pods in the cloud.

- Measurement: Record the total job completion time for training a 3D U-Net model on a 500GB dataset with and without cloud bursting enabled.

Table 1: Inference Latency & Cost Comparison (Sample Data)

| Platform | Model Format | Avg. Latency (ms) | P95 Latency (ms) | Cost per 10k Inferences |

|---|---|---|---|---|

| Cloud (CPU VM) | FP32 SavedModel | 120 | 250 | $0.42 |

| Cloud (T4 GPU) | FP16 TensorRT | 15 | 32 | $0.85 |

| Edge (Jetson Xavier) | INT8 TFLite | 35 | 68 | ~$0.02* |

| Edge (CPU-only) | INT8 TFLite | 210 | 450 | ~$0.01* |

*Assumes depreciated hardware cost; primarily energy.

Table 2: Impact of Optimization Techniques on Model Performance

| Optimization Technique | Model Size Reduction | Inference Speedup | Typical Accuracy Delta |

|---|---|---|---|

| Pruning (50% sparsity) | 40% | 1.8x | -0.5% to -2.0% |

| Post-Training Quantization (INT8) | 75% | 3x - 4x | -1.0% to -3.0% |

| Knowledge Distillation (to smaller model) | 90% | 10x+ | -2.0% to -5.0% |

| Hardware-Specific Compilation (TensorRT) | 0% | 2x - 6x | +/- 0.5% |

Visualizations

Title: Hybrid Cloud-Edge Workflow Orchestration

Title: Dynamic Inference Offloading Logic Flow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Research |

|---|---|

| Kubernetes (K8s) | Container orchestration platform for automating deployment, scaling, and management of containerized applications across cloud and edge. |

| TensorRT / OpenVINO | Hardware-specific SDKs for optimizing trained models (quantization, layer fusion) to achieve maximum inference speed on NVIDIA or Intel hardware. |

| Nextflow / Apache Airflow | Workflow managers that enable the definition, execution, and monitoring of complex, reproducible data pipelines across heterogeneous compute environments. |

| Weights & Biases (W&B) / MLflow | Experiment tracking and model management platforms to log parameters, metrics, and artifacts, ensuring reproducibility in model development. |

| ONNX Runtime | A cross-platform inference engine that allows models trained in one framework (e.g., PyTorch) to be run optimally on hardware from multiple vendors. |

| KubeEdge / OpenYurt | Kubernetes-native platforms that extend containerized application orchestration capabilities to edge networks, managing the cloud-edge workflow. |

Overcoming Implementation Hurdles: Debugging and Optimizing Accelerated Clinical Pipelines

Troubleshooting Guides and FAQs

This technical support center addresses common profiling challenges faced by researchers aiming to reduce computational time for clinical model deployment. Efficient inference is critical for real-world clinical application.

Q1: My model inference is slower than expected during clinical batch processing. Where should I start profiling? A: Begin with a systematic top-down profiling approach to isolate the bottleneck layer.

- Tool: Use PyTorch Profiler (torch.profiler) or TensorFlow Profiler.

- Protocol:

  - Instrument your inference script with the profiler.

  - Run profiling on a representative clinical batch size (e.g., 8-16 samples).

  - Generate a trace file (.json or Chrome trace format).

  - Analyze the trace in TensorBoard or Chrome's chrome://tracing.

- Key Metric: Look for the longest-running operators. Common culprits are non-optimized layers like BatchNorm, Softmax, or inefficient input/output (I/O) operations.

Q2: Profiling shows excessive "CPU-to-GPU" or "GPU-to-CPU" copy time. How can I reduce this overhead? A: This indicates a data pipeline or model setup bottleneck.

- Tool: Use nsys (NVIDIA Nsight Systems) for system-level profiling.

- Protocol:

  - Profile with: nsys profile -t cuda,nvtx -o report --force-overwrite true python infer.py

  - Open the resulting report file (.nsys-rep, or .qdrep in older releases) in the Nsight Systems GUI.

  - Identify the timeline segments for MemCpy (HtoD or DtoH).

- Solution: Ensure data pre-processing is on the GPU if possible, use pinned memory for data loaders, and avoid unnecessary tensor conversions between devices.

Q3: My GPU utilization is low despite a slow inference time. What does this mean? A: Low GPU utilization often points to a CPU-bound bottleneck, such as data loading or sequential operations blocking GPU kernels.

- Tool: Use nvtop (for GPU) and htop (for CPU) concurrently to observe system resource contention.

- Protocol:

  - Run inference while monitoring both tools.

  - If GPU utilization is spiky and CPU core(s) are at 100%, the pipeline is CPU-bound.

- Solution: Optimize data loading (e.g., use DataLoader with multiple workers, pin_memory=True), or use ONNX Runtime or TensorRT to fuse operations and reduce CPU overhead.

Q4: How do I choose between ONNX Runtime and TensorRT for optimizing a PyTorch model for clinical inference? A: The choice depends on the deployment target and need for low-level optimization.

Table 1: Comparison of Inference Optimization Engines

| Feature | ONNX Runtime | TensorRT |

|---|---|---|

| Framework Support | Agnostic (ONNX model from PyTorch, TF, etc.) | Primarily PyTorch/TF via ONNX or directly |

| Execution Provider | CPU, CUDA, TensorRT, OpenVINO, etc. | NVIDIA GPU only |

| Optimization Level | High-level graph optimizations, kernel fusion | Extreme low-level kernel fusion, precision calibration (FP16/INT8) |

| Ease of Use | Generally simpler, good for prototyping | More complex, requires building an engine |

| Best For | Flexible multi-platform/hardware clinical deployment | Max throughput on fixed NVIDIA hardware in production |

Experimental Protocol for Optimization:

- Export your trained model to ONNX format.

- For ONNX Runtime: Benchmark the model using different providers (CPU, CUDA) via the onnxruntime Python API.

- For TensorRT: Use the trtexec tool or the Python API to build a serialized engine, experimenting with FP16 and INT8 precision (requires a calibration dataset).

- Measure latency and throughput on your target clinical hardware.

Q5: How can I quantify the memory bandwidth bottleneck of my model? A: Use the theoretical vs. achieved memory bandwidth analysis.

- Tool: NVIDIA's nvidia-smi and kernel profiling in nsys.

- Protocol:

  - Calculate the model's theoretical memory traffic: Sum of (input size + weight size + output size) for all layers for a single inference.

  - Measure achieved bandwidth using nsys metrics for DRAM throughput.

  - Compute bandwidth utilization: (Achieved Bandwidth / Peak GPU Bandwidth) * 100.

- Interpretation: Low utilization may indicate small kernel sizes or poor memory access patterns; high utilization suggests the model is memory-bound. Optimization involves kernel fusion to reduce memory transfers.
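The arithmetic in this protocol can be sketched as follows. The layer sizes, the achieved bandwidth, and the peak bandwidth below are hypothetical placeholders; in practice the achieved figure would come from the profiler and the peak figure from the GPU's specification sheet.

```python
from typing import Dict, List


def theoretical_traffic_bytes(layers: List[Dict[str, int]]) -> int:
    """Sum of input, weight, and output bytes over all layers for one inference."""
    return sum(
        layer["input_bytes"] + layer["weight_bytes"] + layer["output_bytes"]
        for layer in layers
    )


def bandwidth_utilization_pct(achieved_gb_s: float, peak_gb_s: float) -> float:
    """(Achieved Bandwidth / Peak GPU Bandwidth) * 100."""
    return achieved_gb_s / peak_gb_s * 100.0


def min_transfer_time_ms(traffic_bytes: int, peak_gb_s: float) -> float:
    """Lower bound on inference time if memory traffic ran at peak bandwidth."""
    return traffic_bytes / (peak_gb_s * 1e9) * 1000.0


# Hypothetical two-layer model.
layers = [
    {"input_bytes": 50_000_000, "weight_bytes": 2_000_000, "output_bytes": 100_000_000},
    {"input_bytes": 100_000_000, "weight_bytes": 8_000_000, "output_bytes": 40_000_000},
]
traffic = theoretical_traffic_bytes(layers)
utilization = bandwidth_utilization_pct(achieved_gb_s=300.0, peak_gb_s=1000.0)
floor_ms = min_transfer_time_ms(traffic, peak_gb_s=1000.0)
print(traffic, utilization, floor_ms)
```

Comparing the measured latency with the transfer-time floor gives a quick indication of how much headroom kernel fusion or better memory access patterns could recover.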

Table 2: Key Profiling Metrics and Their Interpretation

| Metric | Tool to Measure | Ideal Profile | Indicates a Bottleneck When... |

|---|---|---|---|

| Operator Duration | PyTorch Profiler | Balanced, no single long op. | One operator (e.g., Gather, Reshape) dominates. |

| GPU Utilization | nvidia-smi, nvtop | Consistently high (>80%) during compute. | Low or spiky (<40%). |

| GPU Memory Bandwidth | nsys | High utilization for memory-bound models. | Low utilization for large tensors. |

| Kernel Launch Time | nsys | Efficient, back-to-back execution. | Gaps between kernel launches on GPU timeline. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Profiling and Optimization Toolkit

| Item | Function |

|---|---|

| PyTorch Profiler | Integrated profiler for detailed operator-level timing and GPU kernel analysis. |

| NVIDIA Nsight Systems | System-wide performance analysis tool tracing from CPU to GPU. |

| ONNX Runtime | Cross-platform inference engine for model optimization and acceleration. |

| TensorRT | NVIDIA SDK for high-performance deep learning inference (GPU-specific). |

| torch.utils.benchmark | Precise micro-benchmarking of PyTorch code snippets. |

| py-spy | Sampling profiler for Python programs, useful for diagnosing CPU issues. |

| DLProf | Deep learning profiler for TensorFlow and PyTorch on NVIDIA GPUs. |

Visualization: Model Inference Profiling Workflow

Title: Inference Bottleneck Diagnosis Decision Tree

Title: Common Inference Bottleneck Types & Solutions

Troubleshooting Guides & FAQs

Q1: After quantizing my PyTorch model for faster inference, the diagnostic accuracy on our clinical validation set dropped by 8%. How do I diagnose the root cause? A: This is a classic post-optimization performance drop. Follow this diagnostic protocol:

- Layer-wise Analysis: Use a library like torchscan or nn-Meter to profile the output distribution (mean, standard deviation) of each layer for both the original (FP32) and quantized (INT8) models. Identify layers with the largest distribution shift.

- Per-Class Performance Check: Generate a confusion matrix for both models. A drop concentrated in specific patient subgroups or disease classes indicates sensitivity loss in relevant feature representations.

- Gradient-Based Sensitivity Analysis: Apply quantization-aware training (QAT) simulation tools (e.g., torch.quantization.fake_quantize) and monitor the sensitivity of layers using methods like MSE of gradients.

Experimental Protocol for Layer-wise Diagnosis:
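One way to sketch the layer-wise comparison is shown below. It assumes that per-layer activations for the same input batch have already been captured from both models (for example with forward hooks) and flattened into lists of floats; the layer names, the values, and the shift score (absolute mean difference plus absolute standard deviation difference) are illustrative choices, not the original protocol.

```python
import statistics
from typing import Dict, List, Tuple


def layer_stats(activations: List[float]) -> Tuple[float, float]:
    """Mean and population standard deviation of one layer's outputs."""
    return statistics.fmean(activations), statistics.pstdev(activations)


def rank_layers_by_shift(
    fp32_outputs: Dict[str, List[float]],
    int8_outputs: Dict[str, List[float]],
) -> List[Tuple[str, float]]:
    """Rank layers by distribution shift between FP32 and quantized outputs."""
    shifts = []
    for name, reference in fp32_outputs.items():
        ref_mean, ref_std = layer_stats(reference)
        q_mean, q_std = layer_stats(int8_outputs[name])
        shifts.append((name, abs(ref_mean - q_mean) + abs(ref_std - q_std)))
    return sorted(shifts, key=lambda item: item[1], reverse=True)


# Hypothetical captured activations for the same input batch.
fp32 = {
    "conv1": [0.10, 0.50, 0.90, 1.30],
    "conv2": [0.00, 1.00, 2.00, 3.00],
    "fc": [-1.00, 0.00, 1.00, 2.00],
}
int8 = {
    "conv1": [0.10, 0.50, 0.90, 1.29],
    "conv2": [0.00, 1.00, 2.00, 2.00],  # large values clipped by quantization
    "fc": [-1.00, 0.00, 1.00, 1.98],
}
ranking = rank_layers_by_shift(fp32, int8)
print(ranking)
```

Layers at the top of the ranking are natural candidates for being kept at higher precision or for targeted re-calibration.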

Q2: I applied pruning to reduce my TensorFlow model size for edge deployment, but the inference speed on our hospital's GPU server did not improve as expected. Why? A: Unstructured pruning often fails to deliver real-world speedups without specialized hardware/software support. The issue likely stems from:

- Unstructured Sparsity: The pruned weights are randomly distributed, so the computation density remains high. The hardware cannot skip the zero-weight multiplications efficiently.

- Overhead: Decompression or sparse matrix operation overhead negates benefits on standard GPUs.

Solution Protocol: Implement Structured Pruning:

- Use a tool like the TensorFlow Model Optimization Toolkit's tfmot.sparsity.keras.PruningSchedule.

- Apply pruning at the channel/filter level for convolutional layers or row/column level for dense layers.

- Fine-tune the pruned model for 10-20% of the original training epochs with a lower learning rate (e.g., 1e-4).
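The idea behind filter-level pruning can be illustrated without any framework. The sketch below removes whole filters rather than individual weights, so the remaining layer is still dense and simply smaller. Ranking filters by L1 norm is an assumed criterion (the protocol above does not prescribe one), and `prune_filters` is a hypothetical helper.

```python
def l1_norm(filter_weights):
    """Sum of absolute values over one (flattened) filter."""
    return sum(abs(w) for w in filter_weights)

def prune_filters(filters, sparsity):
    """Drop the whole filters with the smallest L1 norm.

    filters: list of filters, each a flat list of weights.
    sparsity: fraction of filters to remove (0.5 removes half).
    Returns (kept_filters, kept_indices).
    """
    n_drop = int(len(filters) * sparsity)
    order = sorted(range(len(filters)), key=lambda i: l1_norm(filters[i]))
    dropped = set(order[:n_drop])
    kept_indices = [i for i in range(len(filters)) if i not in dropped]
    return [filters[i] for i in kept_indices], kept_indices

# Toy convolution layer with four filters (hypothetical weights).
conv_filters = [
    [0.9, -0.8, 0.7],     # strong filter
    [0.01, 0.02, -0.01],  # near-zero filter
    [0.5, 0.4, -0.6],
    [0.03, -0.02, 0.01],  # near-zero filter
]
kept, kept_idx = prune_filters(conv_filters, sparsity=0.5)
print(kept_idx)
```

The returned indices matter in a real network: the next layer's input channels have to be sliced with the same indices before fine-tuning, otherwise the shapes no longer match.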

Q3: When converting my trained model to ONNX and then to TensorRT for deployment, I encounter precision errors (e.g., NaN) or mismatched outputs. What is the systematic verification process?

A: This is a pipeline integration error. Implement a differential verification workflow.

Experimental Verification Protocol:

- Golden Reference: Save outputs from the original model (e.g., PyTorch) for a fixed test batch.

- Stage-wise Check:

- ONNX Export: Run inference with the ONNX model (using ONNX Runtime) and compare outputs to the golden reference using Mean Absolute Error (MAE).

- TensorRT Engine: Run inference with the TensorRT engine and compare to the ONNX runtime outputs.

- Precision Enforcement: Explicitly set layer precisions (FP32, FP16, INT8) during TensorRT engine build to avoid automatic casting that may cause instability. Use the polygraphy tool for verbose layer-wise inspection.
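The stage-wise check can be expressed as a small comparison routine. The sketch below assumes each stage's outputs have already been exported as flat lists of floats. The output values are made up for illustration, and the tolerances are example pass criteria that each project would set for itself.

```python
import math

def mean_absolute_error(reference, candidate):
    """MAE between two equally long output vectors."""
    if len(reference) != len(candidate):
        raise ValueError("outputs must have the same length")
    return sum(abs(r - c) for r, c in zip(reference, candidate)) / len(reference)

def verify_stage(name, reference, candidate, tolerance):
    """Return (name, mae, passed); any NaN in the candidate fails the stage."""
    if any(math.isnan(c) for c in candidate):
        return name, float("nan"), False
    mae = mean_absolute_error(reference, candidate)
    return name, mae, mae < tolerance

# Hypothetical outputs for one fixed test batch.
golden = [0.12, 0.85, 0.03]             # original framework (golden reference)
onnx_out = [0.1200001, 0.8499999, 0.03]
trt_fp16 = [0.1203, 0.8496, 0.0301]
trt_broken = [0.12, float("nan"), 0.03]

results = [
    verify_stage("ONNX Runtime vs golden", golden, onnx_out, 1e-5),
    verify_stage("TensorRT FP16 vs ONNX", onnx_out, trt_fp16, 1e-3),
    verify_stage("TensorRT broken vs ONNX", onnx_out, trt_broken, 1e-3),
]
for name, mae, passed in results:
    print(f"{name}: MAE={mae:.2e} -> {'PASS' if passed else 'FAIL'}")
```

Comparing each stage against the previous one, rather than only against the golden reference, localizes the first conversion step at which outputs diverge.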

Q4: How can I perform Knowledge Distillation (KD) to transfer knowledge from a large, accurate model to a small, fast one without losing critical performance on rare clinical phenotypes?

A: Standard KD can dilute performance on minority classes. Use Weighted Knowledge Distillation.

Detailed Methodology:

- Calculate Class Weights: Based on your training set, compute weights w_c = total_samples / (num_classes * count_of_class_c).

- Modify the Distillation Loss: Combine a weighted cross-entropy loss for the student model's hard labels with a weighted Kullback-Leibler (KL) Divergence loss for the teacher's soft labels.

- Loss Function: L_total = α * L_weighted_CE(student, true_labels) + β * L_weighted_KL(student_softmax, teacher_softmax)

- Weight both loss components by the class weights w_c for each sample.

- Focused Training: Create a "critical set" of samples from rare phenotypes and oversample them during distillation fine-tuning.
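To make the arithmetic of the weighted loss concrete, here is a per-sample sketch in plain Python. The class-weight formula and the α/β combination follow the methodology above. The softmax temperature is an added assumption (common in distillation but not stated above), and the function names are hypothetical. The sketch also assumes every class occurs at least once in the training labels.

```python
import math
from collections import Counter

def class_weights(labels, num_classes):
    """w_c = total_samples / (num_classes * count_of_class_c)."""
    counts = Counter(labels)
    total = len(labels)
    return [total / (num_classes * counts[c]) for c in range(num_classes)]

def softmax(logits, temperature=1.0):
    """Numerically stable softmax with optional temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def weighted_kd_loss(student_logits, teacher_logits, label, weights,
                     alpha=0.5, beta=0.5, temperature=2.0):
    """Per-sample loss: w_c * (alpha * CE + beta * KL(teacher || student))."""
    w = weights[label]
    # Hard-label term: cross-entropy of the student against the true label.
    ce = -math.log(softmax(student_logits)[label])
    # Soft-label term on temperature-softened distributions (assumed T).
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(t * math.log(t / s) for t, s in zip(p_teacher, p_student) if t > 0)
    return w * (alpha * ce + beta * kl)

# Toy training set: class 1 is the rare phenotype.
labels = [0] * 8 + [1] * 2
weights = class_weights(labels, num_classes=2)

loss_common = weighted_kd_loss([2.0, 1.0], [2.5, 0.5], 0, weights)
loss_rare = weighted_kd_loss([1.0, 2.0], [0.5, 2.5], 1, weights)
print(weights, loss_common, loss_rare)
```

With these toy labels the rare class receives four times the weight of the common class, so an equally sized error on a rare-phenotype sample contributes four times as much to the total loss.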

Table 1: Impact of Optimization Techniques on Clinical Model Performance

| Optimization Technique | Avg. Speed-Up (Inference) | Avg. Memory Reduction | Typical Accuracy Drop (Clinical Tasks) | Recommended Use Case |
|---|---|---|---|---|
| FP32 to FP16 (Mixed Precision) | 1.5x - 3x | ~50% | 0.1% - 0.5% | Training & Inference on Volta+ GPUs |
| Post-Training Quantization (INT8) | 2x - 4x | ~75% | 1% - 5% (Variable) | Inference on supported hardware (T4, Jetson) |
| Quantization-Aware Training (INT8) | 2x - 4x | ~75% | 0.5% - 2% | Inference when PTQ drop is unacceptable |
| Structured Pruning (50% Sparsity) | 1.2x - 2x* | ~40% | 2% - 8% | Edge deployment with standard hardware |
| Knowledge Distillation (MobileNet) | 2x - 10x (Arch. Change) | ~80% | 3% - 10% | Moving from large to purpose-built small model |

*Speed-up highly dependent on library/hardware support for sparse computation.

Table 2: Verification Results for Model Optimization Pipeline (Example Study)

| Verification Stage | Output Metric vs. Reference (MAE) | Pass/Fail Criteria | Observed Outcome |
|---|---|---|---|
| Original (PyTorch) Model | Baseline (N/A) | N/A | Golden Reference Saved |
| ONNX Export & Runtime | MAE = 1.2e-7 | MAE < 1e-5 | PASS |
| TensorRT (FP32 Engine) | MAE = 1.5e-7 | MAE < 1e-5 | PASS |
| TensorRT (FP16 Engine) | MAE = 8.4e-4 | MAE < 1e-3 | PASS |
| TensorRT (INT8 Engine - PTQ) | MAE = 0.12 | MAE < 0.05 | FAIL → Requires QAT |

Diagrams

Model Optimization & Validation Workflow

Knowledge Distillation with Class Weighting

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Optimization Research | Example Tool/Library |
|---|---|---|
| Model Profiler | Measures execution time, FLOPs, and memory usage per layer to identify bottlenecks. | torchinfo, TensorBoard Profiler, nvprof |
| Quantization Toolkit | Provides APIs for Post-Training Quantization (PTQ) and Quantization-Aware Training (QAT). | PyTorch torch.quantization, TensorFlow TF Model Optimization Toolkit, NNCF (Intel) |
| Pruning Scheduler | Systematically removes weights (structured/unstructured) according to a schedule during training. | tfmot.sparsity.keras, torch.nn.utils.prune, sparseml |
| Neural Architecture Search (NAS) Baseline | Provides pre-optimized, efficient model architectures for target hardware. | MobileNetV3, EfficientNet, MNASNet |
| Cross-Platform Validator | Validates numerical equivalence and performance across different frameworks (e.g., PyTorch → ONNX → TensorRT). | ONNX Runtime, Polygraphy, Netron (visualization) |
| Distillation Loss Module | Implements versatile distillation loss functions (KL Divergence, MSE, etc.) with weighting capabilities. | Custom implementation in PyTorch/TensorFlow using nn.KLDivLoss, nn.MSELoss |
| Hardware-Aware Benchmark Suite | Benchmarks optimized models on target deployment hardware (e.g., hospital GPU, edge device). | MLPerf Inference Benchmark, TensorRT Benchmark, AI2 Inference |

Technical Support Center

Troubleshooting Guide

Q1: Why does my model's performance degrade significantly when applied to data from a different hospital or imaging device?

A: This is a classic case of covariate shift or domain shift. The model trained on your source data (e.g., Hospital A's CT scans) has learned features specific to that environment's acquisition parameters, patient demographics, and data preprocessing. When applied to a new domain, these features become unreliable.

Troubleshooting Steps:

- Perform Exploratory Data Analysis (EDA):

- Calculate and compare basic statistics (mean, standard deviation, skew) of pixel intensities or lab values between source and target datasets.

- Use dimensionality reduction (PCA, t-SNE) to visualize if source and target data form separate clusters.

- Implement Domain Adaptation:

- Algorithm: Use a domain-adversarial neural network (DANN). A gradient reversal layer encourages the feature extractor to learn domain-invariant representations.

- Protocol: Append a domain classifier branch to your model. During training, backpropagate adversarial loss to the feature extractor to confuse the domain classifier while minimizing the primary task loss (e.g., classification error).

- Apply Test-Time Augmentation (TTA):

- During inference on the new data, generate multiple augmented versions of each sample (e.g., slight rotations, noise addition). The average prediction is often more robust to domain-specific noise.
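Test-time augmentation is simple to express in code. The sketch below averages a model's score over the original image and a few augmented views. `toy_model` is a hypothetical stand-in for a trained classifier and the flips are just example transforms. A real pipeline would use clinically plausible augmentations.

```python
def hflip(image):
    """Mirror a 2D image (list of rows) left to right."""
    return [row[::-1] for row in image]

def vflip(image):
    """Mirror a 2D image top to bottom."""
    return image[::-1]

def predict_with_tta(model, image, transforms):
    """Average model scores over the original image and its augmented views."""
    views = [image] + [t(image) for t in transforms]
    scores = [model(v) for v in views]
    return sum(scores) / len(scores)

def toy_model(image):
    """Hypothetical classifier that only looks at the left column's brightness."""
    return sum(row[0] for row in image) / len(image)

image = [[1.0, 0.0],
         [1.0, 0.0]]

single = toy_model(image)
averaged = predict_with_tta(toy_model, image, [hflip, vflip])
print(single, averaged)
```

The cost is one forward pass per view, which is why TTA multiplies inference time by the number of augmented versions.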

Q2: My pipeline fails when processing new clinical data files due to "unexpected formatting" or "missing columns." How can I prevent this?

A: This is a data schema inconsistency error. Heterogeneous sources (EHR systems, labs, wearable devices) export data with different file structures, column names, and encoding standards.

Troubleshooting Steps:

- Implement a Data Validation Layer:

- Use a schema validation library (e.g., Pandera, Great Expectations for Python) to define strict contracts for incoming data.

- Create a pre-ingestion check that validates column existence, data types, value ranges (e.g., heart rate > 0), and allowed categorical values before any processing begins.

- Design a Canonical Data Model (CDM):

- Map all incoming data formats to a single, unified internal schema. This mapping should be configurable (e.g., via JSON files) for each new data source without altering core pipeline code.
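The two steps above (a configurable mapping into a canonical schema, followed by a pre-ingestion check) can be sketched with the standard library alone. A production pipeline would more likely express the contract in Pandera or Great Expectations. The schema, column names, source names, and allowed values below are all hypothetical.

```python
# Canonical internal schema: column name -> expected Python type.
CANONICAL_SCHEMA = {"patient_id": str, "heart_rate": float, "sex": str}
ALLOWED_SEX = {"F", "M", "U"}

# Per-source column mappings; in practice these could live in JSON files.
SOURCE_MAPPINGS = {
    "hospital_a": {"PatientID": "patient_id", "HR": "heart_rate", "Sex": "sex"},
    "wearable_b": {"pid": "patient_id", "bpm": "heart_rate", "gender": "sex"},
}

def to_canonical(record, source):
    """Rename a raw record's columns to the canonical names for its source."""
    mapping = SOURCE_MAPPINGS[source]
    return {canon: record[raw] for raw, canon in mapping.items() if raw in record}

def validate(record):
    """Return a list of contract violations (empty list means valid)."""
    errors = []
    for column, expected_type in CANONICAL_SCHEMA.items():
        if column not in record:
            errors.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            errors.append(f"wrong type for {column}: {type(record[column]).__name__}")
    hr = record.get("heart_rate")
    if isinstance(hr, float) and hr <= 0:
        errors.append("heart_rate must be > 0")
    sex = record.get("sex")
    if isinstance(sex, str) and sex not in ALLOWED_SEX:
        errors.append(f"unexpected sex value: {sex}")
    return errors

good = to_canonical({"PatientID": "A1", "HR": 72.0, "Sex": "F"}, "hospital_a")
bad = to_canonical({"pid": "B7", "bpm": -5.0}, "wearable_b")

good_errors = validate(good)
bad_errors = validate(bad)
print(good_errors, bad_errors)
```

Adding a new data source then only requires a new mapping entry. The validation and downstream code stay untouched.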

Q3: How do I handle missing data that follows different patterns across data sources (e.g., lab tests not performed vs. not recorded)?

A: Treating all missing values identically can introduce bias. The pattern of missingness itself can be clinically informative (Missing Not At Random - MNAR).

Troubleshooting Steps:

- Characterize Missingness:

- For each variable, calculate the percentage of missing values per data source.

- Use statistical tests (e.g., Little's MCAR test) to assess if missingness is random or related to observed variables.

- Implement Pattern-Aware Imputation:

- Create binary indicator variables (1 if data is missing, 0 if present) for key variables with suspected MNAR patterns.

- Use Multivariate Imputation by Chained Equations (MICE) with the missing indicators included as predictors to preserve the potential signal in the missingness pattern.
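The characterization and indicator steps can be sketched as follows. Rows are plain dictionaries with `None` marking a missing value. The variable name `lactate`, the site names, and the helper functions are hypothetical. The MICE step itself is not shown; the indicator columns produced here would be passed to it as additional predictors.

```python
def missing_rate_by_source(rows, variable):
    """Fraction of rows per data source where `variable` is missing."""
    totals, missing = {}, {}
    for row in rows:
        src = row["source"]
        totals[src] = totals.get(src, 0) + 1
        if row.get(variable) is None:
            missing[src] = missing.get(src, 0) + 1
    return {src: missing.get(src, 0) / n for src, n in totals.items()}

def add_missing_indicator(rows, variable):
    """Return copies of the rows with a 0/1 `<variable>_missing` column."""
    flag = f"{variable}_missing"
    return [{**row, flag: 1 if row.get(variable) is None else 0} for row in rows]

rows = [
    {"source": "site_a", "lactate": 1.8},
    {"source": "site_a", "lactate": None},
    {"source": "site_b", "lactate": None},
    {"source": "site_b", "lactate": None},
]

rates = missing_rate_by_source(rows, "lactate")
flagged = add_missing_indicator(rows, "lactate")
print(rates)
print(flagged[0], flagged[1])
```

A large gap in missing rates between sources, as in this toy data, is a hint that missingness reflects site practice (test not ordered or not recorded) rather than chance.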

Q4: Model training is extremely slow on our large, multi-modal clinical dataset. How can we accelerate it, in keeping with the goal of reducing computational time?

A: Bottlenecks often occur in data loading, preprocessing, or inefficient model architectures.

Troubleshooting Steps:

- Optimize Data Loading:

- Convert raw data (e.g., images in JPEG, CSVs) into a serialized, chunked format. Apache Parquet gives fast columnar access and compression for tabular data, while HDF5 provides chunked, compressed storage for arrays such as images.

- Use a tf.data.Dataset (TensorFlow) or a DataLoader with multiple workers (PyTorch) to parallelize data loading and augmentation, preventing the GPU from idling.

- Profile and Simplify Preprocessing:

- Use a profiler (e.g., cProfile, PyTorch Profiler) to identify the slowest pipeline steps.

- Cache preprocessed data to disk after the first epoch if no random on-the-fly augmentation is used.

- Employ Mixed-Precision Training:

- Use automatic mixed precision (AMP) available in PyTorch (torch.cuda.amp) and TensorFlow. This uses 16-bit floating-point numbers for certain operations, cutting memory use and speeding up training on compatible GPUs with minimal accuracy loss.

Frequently Asked Questions (FAQs)

Q: What is the most common point of failure when integrating genomic and imaging data?

A: The primary failure point is temporal misalignment. A genomic sample may be taken at diagnosis, while an MRI scan occurs weeks later after initial treatment. Models assuming simultaneous data capture will learn incorrect correlations. Solution: Implement a time-window framework, only associating data points within a clinically plausible timeframe, or explicitly model temporal dynamics using sequence models.
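A time-window framework of this kind can be sketched in a few lines. The example pairs each genomic sample with the nearest imaging study of the same patient, but only if the two fall within a maximum gap. The 30-day default, the record fields, and the function name are illustrative assumptions. The clinically plausible window depends on the disease and treatment course.

```python
from datetime import date, timedelta

def pair_within_window(genomic_samples, imaging_studies, max_gap_days=30):
    """Pair each genomic sample with the nearest same-patient imaging study
    taken within `max_gap_days`; samples without a match are left unpaired."""
    window = timedelta(days=max_gap_days)
    pairs = []
    for g in genomic_samples:
        candidates = [
            m for m in imaging_studies
            if m["patient_id"] == g["patient_id"]
            and abs(m["date"] - g["date"]) <= window
        ]
        if candidates:
            nearest = min(candidates, key=lambda m: abs(m["date"] - g["date"]))
            pairs.append((g["sample_id"], nearest["study_id"]))
    return pairs

genomic = [
    {"patient_id": "P1", "sample_id": "G1", "date": date(2023, 1, 10)},
    {"patient_id": "P2", "sample_id": "G2", "date": date(2023, 1, 10)},
]
imaging = [
    {"patient_id": "P1", "study_id": "MR1", "date": date(2023, 1, 20)},
    {"patient_id": "P1", "study_id": "MR2", "date": date(2023, 4, 1)},
    {"patient_id": "P2", "study_id": "MR3", "date": date(2023, 3, 15)},
]

pairs = pair_within_window(genomic, imaging)
print(pairs)
```

Here patient P2's only scan is more than two months after sampling, so it is excluded rather than silently treated as simultaneous.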

Q: We see high variance in cross-validation results. Is this due to our data's heterogeneity?

A: Likely yes. When patients and sites differ substantially, fold composition strongly affects the score. Standard random k-fold CV can also leak data from the same patient into both training and validation folds, creating optimistic bias. Solution: Use patient-wise or site-wise grouped cross-validation. Ensure all samples from a single patient (or clinical site) are contained within a single fold. This better estimates performance on new, unseen patients or hospitals.
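A patient-wise split can be sketched without any dependencies. In practice scikit-learn's `GroupKFold` covers the same need. The round-robin assignment of patients to folds below is a simplifying assumption and does not balance fold sizes or class ratios.

```python
def grouped_k_fold(patient_ids, k):
    """Yield (train_idx, val_idx) pairs in which all samples of a patient
    fall on the same side of the split."""
    unique = sorted(set(patient_ids))
    fold_of = {pid: i % k for i, pid in enumerate(unique)}
    for fold in range(k):
        val = [i for i, pid in enumerate(patient_ids) if fold_of[pid] == fold]
        train = [i for i, pid in enumerate(patient_ids) if fold_of[pid] != fold]
        yield train, val

# One entry per sample; patients p1 and p3 contribute two samples each.
patient_ids = ["p1", "p1", "p2", "p3", "p3", "p4"]
folds = list(grouped_k_fold(patient_ids, k=2))

for train_idx, val_idx in folds:
    train_patients = {patient_ids[i] for i in train_idx}
    val_patients = {patient_ids[i] for i in val_idx}
    print(sorted(train_patients), sorted(val_patients))
```

For site-wise validation the same function applies unchanged if site identifiers are passed in place of patient identifiers.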

Q: How can we ensure our model is robust against slight variations in how a clinician annotates an image?

A: This is label noise or inter-rater variability. Solutions:

- Use algorithms robust to label noise (e.g., symmetric cross-entropy loss).

- Train on consensus labels from multiple annotators, or model the annotation process itself.

- Apply test-time augmentation (TTA) to smooth predictions over minor input variations.

Q: What's a key checkpoint before deploying a model to a new clinical environment?

A: Conduct a silent trial or shadow mode deployment. Run the model on live, incoming data but do not display its predictions to clinicians. Compare its outputs to ground truth over time to detect performance decay due to unanticipated data shifts before clinical impact.

Data Presentation

Table 1: Impact of Data Heterogeneity Mitigation Techniques on Model Performance & Computational Time

| Mitigation Technique | Average Performance Increase (AUC-ROC) | Effect on Training Compute | Effect on Inference Time | Best Suited For |
|---|---|---|---|---|
| Grouped (Patient) Cross-Validation | N/A (Evaluation Improvement) | Minimal | None | All clinical models to prevent data leakage. |
| Domain-Adversarial Training (DANN) | +0.08 - +0.15 | High (20-30% increase) | Minimal | Multi-site studies, adapting to new scanners. |
| Test-Time Augmentation (TTA) | +0.03 - +0.06 | None | Large increase (5-10x slower) | Image-based models (radiology, pathology). |
| Mixed-Precision Training (AMP) | ± 0.01 (Negligible) | Reduction of 30-50% | Reduction of ~20% | Large model training on modern NVIDIA GPUs. |
| Cached & Serialized Data Loading | ± 0.00 | Reduction of 40-70% in epoch time | Minimal | Pipelines bottlenecked by disk I/O. |

Experimental Protocols